April 28, 2008

BCI, AGI & WBE

Accelerating Future » Japanese BCI Research Enters the Skull discusses the possibility of intelligence amplification using brain-computer interfacing (BCI). Michael Anissimov thinks this is much harder than creating artificial general intelligence (AGI) and worries that it will lure people from AGI to BCI because it may help us rather than some constructed mind children. Roko takes up the thread in Artificial Intelligence vs. Brain-computer interfaces and claims that AGI is the easier and safer path to superintelligence.

I think both of my friends are missing the point: BCI and AGI aim at different things, use different resources and are competing with another acronym, WBE.

Roko argues that the brain will be a mess to connect to; no quarrel with that. But the scope of the problem is relatively limited: BCI is currently all about adding extra interfaces, essentially new I/O abilities. This is what it will be about for a very long time. If it develops well we will first get working I/O so that blind and deaf people can see and hear reasonably well, paralysed people can move and neuroscientists can get some interesting raw data to think about. Later on the bandwidth and fidelity will increase until all sensory and motor signals can be intercepted and replaced. Beyond that, interfaces would enable links between other brain regions and computers, allowing information exchange at higher levels of abstraction. It is only at this point that serious intelligence amplification even begins to be possible. The previous stages might enable quick user interfaces for smart external software, but they would hardly be orders of magnitude better than using external screens, voice recognition or keyboards.

The community developing BCI does not appreciably overlap with the artificial intelligence community except perhaps for a few computational neuroscientists. In fact, the context for BCI development involves medical engineering, the legal, corporate and economic context of hi-tech medicine and much work on implant engineering and signal processing. Meanwhile AGI is a subset of AI, and exists within a software-focused community funded in entirely different ways. It is pretty unlikely these fields compete for money, brains or academic space. The only place where they compete is in transhumanist attention.

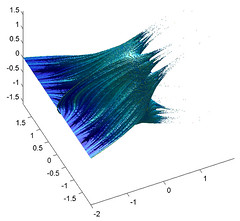

However, there is a third way towards superintelligence. That is building on whole brain emulation (WBE). WBE is based on scanning a suitable brain at the right resolution, then running an emulation of the neural network (or whatever abstraction level works). Leaving aside issues of personal continuity and philosophy of mind, if WBE succeeds in making a software emulation of a particular human brain, we would stand at the threshold of a superintelligence transition very different from the super-smart AI envisioned in AGI or the augmented cyborg of BCI. The reason, as Robin Hanson pointed out, is that now we have copyable human capital. The possibility of getting a lot of brainpower cheaply changes things enormously (even a fairly expensive computer is cheaper than the cost of educating a person for a skilled job like, say, lawyer or nuclear physicist). While individual emulated minds are not smarter than the original, the possibility of running large (potentially very large) teams of the best people for the task can allow them to solve many super-hard problems (see forthcoming paper by me and Toby Ord on that).

WBE is hard, but a different kind of hard from BCI and AGI. In BCI we need to make a biocompatible interface and then figure out how to signal with the brain - it requires us to gain a lot of experience with neural representations. These may be different from individual to individual. In AGI we need to figure out the algorithm necessary for open-ended intelligence, and then supply it with enough computing power and training for it to do something useful. In WBE we need to figure out how to scan brains at the right resolution, how to simulate brains at that resolution and have enough computing power to do it. In terms of understanding things, BCI requires understanding the individual neural representations (and probably for intelligence amplification, how to make them better or link them to AI-like external systems). AGI requires us to understand general intelligence well enough to build it (or set up a system that could get there by trial-and-error). For WBE we need to understand low-level neural function.

It seems to me that other technical considerations (biocompatible broadband neural interfaces, computing power, scanning methods) are quite solvable problems that, assuming funding for the area, will be solved with relatively little fuss. There are no fundamental physics or biology concerns that suggest they might be impossible (unless brains turn out to be run by quantum souls or something equally bizarre).

The big uncertainty will be which kind of understanding will be solved first and best: how brains represent high-level information, how minds think or how low-level neural function works. The last one is least glamorous but seems solvable using a lot of traditional neuroscience: we have much experience in pursuing it. It is a slow, cumbersome process that produces messy explanations, but it will eventually get where we need it. We have much less information about how brains represent information (although this is symbiotic with the low-level neuroscience, of course) and no real idea whether there is a simple neural code, a complex one, or (as Nicolelis suggested) none at all and it is all about mutually adapting systems. For AGI we have even less certainty about how to make progress: are current systems (beside improved power) smarter than past systems? It seems that we have made some progress in terms of robustness, learning ability etc, but we have no way of telling whether we are close or not to the goal.

So my prediction is that AGI could happen at nearly any time (if it happens), WBE will happen relatively reliably mid-century if nothing pre-empts it, and BCI will be slow in coming and developing. WBE could (as Hanson shows) produce a very rapid economic transition due to cheap brainpower, and so could non-superintelligent AGI. BCI is likely to be slow in changing the world. BCI and WBE are on the other hand symbiotic: both involve much of the same neuroscience groundwork, they may have overlapping funding sources and research communities, and large scale neural simulations are useful for developing both. If BCI or WBE manages to produce a good understanding of how the cortex does what it does (a general learning/thinking system that can adapt to a lot of different senses or bodies) that could produce bio-inspired AGI before BCI or WBE really transforms things.

The main concern transhumanists have with these technologies is to ensure friendliness. That is, the superintelligent entities created this way should not be too dangerous for the humans around them. Most efforts have aimed at doing this by looking at how to design hypothetical beings to be friendly. This naturally leads to the assumption that non-designed superintelligences are too risky, a popular position among AGI people. However, this may be too narrow an approach to friendliness: the reason people do not kill each other all the time is not just evolved social inhibitions, but a number of other factors: culturally transmitted values and rules, economic self-interest, reputations, legal enforcement and the threat of retaliation, to name a few. This creates a multi-layered defence. Each constraint is imperfect but reduces risk somewhat, and a being needs to fall through all of them in order to become dangerous.
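The layered-defence point can be made concrete with a toy calculation. This is only a sketch under a strong assumption that the post does not make: that the constraints fail independently of each other. The failure probabilities are made-up illustrative numbers, not estimates, and `p_fall_through` is a hypothetical helper name.

```python
import math

def p_fall_through(layer_failure_probs):
    """Probability that a being slips past every constraint.

    Assumes (for illustration only) that the layers fail independently,
    so the individual failure probabilities simply multiply.
    """
    return math.prod(layer_failure_probs)

# Hypothetical numbers: each layer on its own is a coin flip.
two_layers = p_fall_through([0.5, 0.5])
three_layers = p_fall_through([0.5, 0.5, 0.5])
print(two_layers, three_layers)
```

Even quite leaky layers multiply down quickly. If the failures are correlated (one cause knocks out several constraints at once) the benefit shrinks, which is roughly the worry in the hard take-off case.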

The AGI people practically always seem to assume a hard take-off scenario where there is just a single rapidly improving intelligence and essentially no context limiting it. In this case the constraints will likely not be enough and the only chance of risk mitigation is friendly design (which would be subject to miscalculation) - or avoiding making an AGI in the first place (guaranteed to be safe). If we had serious reason to think AGI with hard take-off to be likely, the rational thing might be to prevent AGI development - possibly with coercive methods, since the risks would be too great.

BCI and WBE cannot guarantee friendly raw material, but the minds would be related to human minds. We already have a pretty good idea of how to motivate human-like entities to behave in a proper manner. Their development would also be relatively gradual. BCI would be especially gradual, since it would both have a long development time and remain limited by biological timescales. The fastest WBE breakthrough scenario would be a neuroscience breakthrough that enables already developed scanning and simulation methods to be run on cheap hardware. A more likely development would be that simulations are computer-limited and become larger and faster at the same rate as hardware. This gradual development would enmesh them in social, economic and legal ties. The smartest BCI-augmented person would likely not be significantly smarter or more powerful than the second and third smartest, and there would exist a continuum of enhancement between them and the rest of humanity. In the case of emulations the superintelligence would exist on the organisational level in the same way as corporations and nations outperform individuals, and it would be subject to the same kind of constraints and regulations as current collective entities. Hence, while we have no way of guaranteeing the friendliness of any of the entities in themselves, we have a high likelihood of having numerous kinds of constraints and motivators to keep them friendly.

If one's goal is to become superintelligent, BCI and WBE both seem to offer the chance (WBE would allow software upgrades not unlike BCI IA in due time). If one's goal is to reduce the risk of unfriendly entities, one should avoid AGI unless one believes in a soft take-off. If one's goal is to maximize the amount of intelligence as soon as possible, then one must weigh the relative likelihoods of these three approaches succeeding before each other and go for the quickest that extra effort can speed up.

April 24, 2008

Decrescendo a la Bruxelles

And continuing today's theme of apparently silly regulation: Brussels has managed to inadvertently regulate the style of future music. The legislation itself is not absurd, but I'm willing to bet the consequences will be. And it will speed up the automation of some orchestral jobs.

What I am curious about is how the noise regulations handle small, angry children. Will work safety experts step up and gag them - or forcibly remove kindergarten teachers? Or should people around kids wear earplugs? Will there be EU grants for noise cancellation equipment for nurseries? (So far most active noise control in this area has been for infant incubators.) At least in 2005 the EU claimed it would be "reasonable" when dealing with nursery and school noise, but given the EU's past record of reasonableness (especially with the above orchestral limitations) I expect we will see much silliness...

Don't think that they don't have feelings just 'cause a radish can't scream

On ethics in the news I blog about the Dignity of the Carrot - Swiss federal law now requires researchers experimenting on plants to consider the dignity of plants. To define this concept the Federal Ethics Committee on Non-Human Biotechnology has created a set of guidelines that serve to muddle things nicely by refusing to be normative, yet aiming for some kind of vitalist beneficence.

The conclusion seems to be that we should respect plants as they are, we cannot truly own them, we should not hurt them unnecessarily and we shouldn't turn them into tools for our human desires. Tell that to Swiss agrobiotech companies, gardeners and vegans. And what to do about the Geneva flower clock?

The problem seems to be that plant dignity is largely cut loose from most social practice, the use of dignity in other domains and how our culture views human dignity. It hence becomes either an empty term, a moral purification for grant-writers and job-security for ethics experts, or a symbol for a particular romantic view of nature. But what about other views of nature? A garden is an interaction between human desires and designs and plant/animal adaptation, ideally something that enriches both. This kind of man-as-co-creator view does not seem well represented in the dignity guidelines, yet it is a valid position and can be defended using various ethical arguments. Can a proposal be rejected because it uses the wrong kind of plant dignity? Many ethical systems (such as many religious ones) rather clearly say that only humans possess dignity; if a proposal rejects plant dignity on confessional grounds, does rejecting the proposal imply religious discrimination?

I'm all for expanding circles of ethical concern, but that does not mean dignity is a good way of handling the value of nature. By using a concept strongly tied to sacrosanct human rights it also becomes inherently conservative.

But then again, I think we need more posthuman dignity - and if we are using dignity for plants, why not use a similar concept for enhancing plants? Wouldn't a Dyson tree have potential for great dignity?

April 23, 2008

Doom and Gloom in July

Just a little announcement: we will have a conference on Global Catastrophic Risks 17-20 July in Oxford. Leading experts on different risks will lecture about the state of current thinking on them. This includes nuclear terrorism, cosmic threats (supernova, comets and asteroids), the long term fate of the universe, pandemics, nanotechnology, ecological disasters, climate change, biotechnology and biosecurity, the cognitive biases associated with making judgements in the context of global catastrophic risk, social collapse, and the role of the insurance industry in mitigating and quantifying risk.

In short, lots of stimulating discussion on how to do something about Armageddon.

April 22, 2008

Homeoterrorism

Paul Kuliniewicz describes how to be a homeopathic bioterrorist (via respectful insolence).

Given that salt causes hypertension, I would go for diluting salt water until I had a deadly hypotension-causing mixture. Weaponize it by putting it in a sprayer, and you have a deadly weapon using just household ingredients!

Given my research interests, I'm more keen on making the ultimate cognition enhancer. Since alcohol causes people to behave stupidly, a homeopathic dilution of alcohol in water must make the drinker smarter. So dilute pure ethanol in water, shake (I mean succuss - homeopathy doesn't work if you don't use the right terminology: the water remembers insults!), put a drop of the mixture in more water, succuss, rinse, repeat until you have a 30C dilution. This is going to make you a genius! Muhahahaha!
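For anyone wondering how much ethanol survives the recipe, here is a small back-of-the-envelope sketch. Each "C" step is a 1:100 dilution, so 30C is a factor of 100^30 = 10^60. The one-mole starting amount and the function names are my own illustrative assumptions.

```python
from fractions import Fraction

AVOGADRO = 6.02214076e23  # molecules per mole (exact SI definition)

def dilution_factor(c_steps):
    """Fraction of the original concentration left after c_steps 1:100 dilutions."""
    return Fraction(1, 100) ** c_steps

def expected_molecules(moles_start, c_steps):
    """Expected number of original molecules in a sample that held
    moles_start moles before dilution (hypothetical starting amount)."""
    return moles_start * AVOGADRO * float(dilution_factor(c_steps))

for steps in (6, 12, 30):
    print(steps, expected_molecules(1.0, steps))
```

Starting from a full mole, the expected count drops below one molecule at around 12C; at 30C you would need an absurd number of doses to expect a single ethanol molecule.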

More seriously (?) I wonder how many athletes use homeopathic doping. Given the prevalence of belief in homeopathy, that it is 100% certain not to be detectable and that (strangely) homeopathic remedies never have any negative side effects, I would suspect it is a widespread practice. What does WADA do about it? After all, it is just as corrosive to the spirit of sport as real doping, it is unfair that some people use it but not others and the placebo effect might even give an advantage.

I really wonder if people who believe in homeopathy ever dilute anything in their normal lives... oh, silly me, that kind of common sense experience doesn't apply to medicine! If there is something that annoys me it is compartmentalising one's life into domains that are mutually inconsistent and then refusing to even consider this to be a problem.

April 17, 2008

Free Will and Faster Computers

This week on Ethical Perspectives on the News: Who's this 'we', Dr Soon? Unconscious Action and Moral Responsibility. A quick take on the recent results showing unconscious processes preparing (and predicting) an action seconds before we become aware we do it. I argue against the claim it says something about our free will.

The first reason is that free will does not mean conscious free will - we could have free will but it could be independent of our consciousness. We are not just our conscious part but include our unconscious parts.

The second reason is that the experiment cannot show us anything we cannot already see in ordinary life. When I chose A over B, could I have chosen differently? There is no way of actually seeing that counterfactual, since we only get one realisation at a time. We can repeat the experiment and choose differently, but that will not satisfy the people who think these variations were predetermined.

However, being of a compatibilist bent, I think it doesn't matter whether the universe is deterministic or not for free will, since free will is a phenomenon on the personal and social level, not on the fundamental physics level. I clearly can choose relatively freely in most situations, so empirically we have free will - not a perfect context-independent freedom, but the ability to make choices that are deeply unpredictable and based on complex internal states.

The real issue with the brain scanning findings is that they suggest we have surprisingly long-running action planning pipelines. That might be very interesting from an enhancement perspective. Imagine devices noticing likely future actions and preparing for them - if my computer loads files in the background that I will need in a few seconds, it will become subjectively much faster.

April 15, 2008

More LHC Philosophy, This Time with Demons

The debate continues on Ethical Perspectives on the News: now Toby Ord argues that These are not the probabilities you are looking for and Nicholas Shackel has Three arguments against turning the Large Hadron Collider on.

Toby's point is simple but important: most risk estimates are based on assumptions of known physics, which is after all what we want to test and extend into unknown domains. Hence such estimates are uncertain and not the right kind of risk estimates. On the other hand, it is not clear what kind of estimates would be the right kind.

Nick makes the argument that avoidable risks of destruction of all present and future goodness should not be taken, no matter how good their results could be. Unfortunately it is not entirely clear where this axiom comes from and what the limits to its applicability are. Since all actions have some (microscopic) risk of ending the world, and in particular never-tested actions and actions affecting the world globally reasonably have higher risks, it would seem to follow that I'm not allowed to even make this post, lest it cause some unlikely chain of events ending in doomsday.

I think the radical uncertainty problem of Toby is interesting: how are we supposed to act in such situations? When even arguing that we should not take any risks clearly has problems (as above) in the domain we know something about, it seems even more problematic in radically uncertain situations. In the end I think the real-world solution is just to muddle on, a bit cautiously (depending on the amount of newness, similarity to past risks and current theories) but largely based on past probability priors.

Maybe this can be viewed as a case of "Taleb's demon" (an invention by Peter Taylor, see this ppt). We have the usual urn with 10 black and 10 white balls. If I draw one, you can likely calculate the probability of me drawing a white one. But now Taleb's demon appears, and does something to the urn. What is the probability now? He could have removed balls or added extra white or black balls. Worse, he could have added red balls. Or frogs. The basis for normal probability theory is that we know the sample space, the space of possibilities. But here we are uncertain about even that.
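As a toy illustration of why the sample space matters, here is a minimal sketch. The untouched urn is the one from the text; the particular tampering (five red balls and three frogs) is just one invented possibility among endlessly many, and `p_draw` is a hypothetical helper.

```python
from fractions import Fraction

def p_draw(urn, item):
    """Probability of drawing `item`, assuming every object in the urn is
    equally likely to be drawn and that we know exactly what is in it."""
    total = sum(urn.values())
    return Fraction(urn.get(item, 0), total)

urn = {"black": 10, "white": 10}

# One hypothetical thing the demon might have done (we cannot know which).
tampered = {**urn, "red": 5, "frog": 3}

print(p_draw(urn, "white"))       # known sample space
print(p_draw(tampered, "white"))  # only computable if we know what the demon did
```

The calculation itself is trivial in both cases. The trouble is that after the demon's visit we do not know which dictionary to pass in, or even what keys it might contain.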

The interesting thing is that it doesn't deter most people. We seem willing to extend our sample space based on scraps of information (before I mentioned frogs, you were probably still considering the urn as only holding balls) and experience - when I draw the concept of Democracy from the urn instead of a material object, you rapidly expand your sample space to cover at least all nouns. We also adjust our priors accordingly, most likely towards the "anything is possible" end of the spectrum if more outré examples come up.

I think we have a similar situation in the case of the LHC and risk. We know Taleb's demon has fudged with the urn, but until someone mentioned black holes that possibility was not on the agenda. The expansion of possibilities also makes us assign more probability/risk concern to events that we have no information about. If I start talking about the possibility of risk from self-replicating meson molecules people will start assigning risk to them automatically. Once a possibility is out of the bottle it cannot be put back in, and it gets assigned a risk level even when there is no information. Add a lot of possibility, and you add a lot of risk.

The only way of getting the risks under control is to make experiments. Drawing ten red, blue and white balls from the urn is not proof that it doesn't contain frogs or black holes, but it would calm us somewhat by reducing our priors. Similarly, the lack of disasters at the Brookhaven accelerator, the low supernova rate and the temporal location of Earth in the formation of solar systems in the galaxy should also be calming even if they are not conclusive evidence by any stretch. The Fermi Paradox on the other hand might be evidence that there is a problem somewhere.

Maybe the real answer to the Fermi paradox is that any sufficiently advanced civilization sees so many possibilities filled with risk that they end up hiding, not doing anything. It would surely fill Taleb's demon with glee.

April 12, 2008

Remember my paper

A new paper by me and Matthew Liao is out online, The Normativity of Memory Modification in Neuroethics.

This paper is about the problems for morality that can occur when we edit our memories: if I erase a memory, may I become wrongly forgiving? If I add artificial memories, may I start living in a falsehood? Overall, we find that these modifications are not particularly troublesome as long as they do not harm others or oneself (this is the point where bioconservatives will mount their attack anyway) and we do not forget things we have an obligation to remember.

The real headache is of course the issues that are not strictly normative, like how we deal with each other having modified memories. On one hand we are already doing it (our memories are not very trustworthy and are surprisingly socially constructed), but as soon as inter-personal obligations and relations occur ethics always moves up one notch in complexity. Just recall the bizarre legal/social issues in John C. Wright's The Golden Age surrounding the memory editing and socially sanctioned self-censorship of the protagonist.

April 09, 2008

Enhancement: Yes, People Like It

A survey done by Aftonbladet in regards to my article showed 62.5% of the respondents thought we had a right to change our bodies.

Nature did an informal survey of readers' views on cognition enhancing drugs. About 80% thought enhancement of healthy people is OK and 69% thought mild side effects were acceptable. About 20% of the respondents had used methylphenidate, modafinil or beta blockers. There was no real age effect (which is odd, or shows that enhancement use is completely uncorrelated with stimulant use, which I doubt). About half experienced unpleasant side effects (and stopped using them for this reason), but there was no correlation with frequency of use. About a third were bought from the Internet, 52% were prescription drugs (and this demonstrates that as long as there are valid therapeutic uses there will be ways of getting them for people with social capital - banning overt enhancement will not affect this group much). People were understandably less eager to allow children to use them, but thought there may be a pressure on parents to do so anyway.

Neither survey is very scientific, and we can expect hefty biases - especially the Nature result is biased towards international academics who have thought about cognition enhancement (for example because they use it). But I think they show that we are seeing a normalisation of it.

Nature also had a nice editorial Defining 'natural' about the ethical irrelevance of yuck reactions to the male pregnancy story (and the enhancers). It concludes:

"Ultimately, our visceral concept of what is 'natural' depends on what we are used to, and will continue to evolve as technology does."

So there you have it, morphological freedom is on the move in both Nature and Aftonbladet.

April 08, 2008

21st Century Virtues

On our practical ethics blog, I blog about The stresses of 24 hour creative work: How much would Aristotle blog? - about the stresses of for-pay blogging and other modern creative professions and how virtue ethics may be a solution. (blog, blog, blog...)

Overall, we need to think about what new virtues we need in the 21st century. Here are some loose ideas:

- Equanimity in the face of choice - learning to enjoy the option we take without regretting all the other options, as well as recognizing when it may not matter much which option we choose.

- Temperance in the face of information flooding - this is linked with the ability to recognize when more information is not needed or useful. Another related ability is to select the right level of detail when thinking or modelling something.

- The ability to quit something deeply engaging when it is time - be it a computer game, blogging, programming, a drug habit or a job.

- Accurate estimation of how much something is worth to us - without being influenced by conventional views of its value.

- Recognizing cognitive biases affecting our judgement. Even when we cannot get rid of them we can exercise intellectual integrity by becoming more humble about the reliability of our own opinions.

- Treating risks and probabilities rationally.

- Maybe time-management skills ought to be recognized more clearly as virtues with a moral aspect and not just as useful: we are wasting less of our own and others' time, energy and cognition.

April 07, 2008

Morphological Freedom in the Evening Sheet

I'm arguing for morphological freedom in the Swedish newspaper Aftonbladet: Det finns inte ett enda sätt att leva som skulle göra alla lyckliga.

Essentially it is my liberal argument that given the complexity of human life in general and the uniqueness of individual lives there is no standard method of making us happy, and hence we must give each other a great deal of freedom to live different lives and attempt different ways to our goals. This in turn implies that we need to control or own our bodies, and that we hence have a right to modify them. This is a negative right, and especially important in regards to being allowed not to modify ourselves when faced with outside pressure. This right becomes ever more important as our biotechnological power increases.

April 05, 2008

Taxi Drivers & Plagiarism

I normally do not read comments on newspaper sites: by Ord's Law, online comments are usually an order of magnitude worse than the original post. But a comment to this story in the Swedish newspaper Svenska Dagbladet caught my eye. The story is nice fluff about the New York cab driver association's prizes for extraordinary service. It is also a direct translation of a SkyNews story - same content, same quotes, same structure. Googling for text from the SkyNews story finds around 40 pages, most of which are other news sites crediting SkyNews. This version contains some identical text, but also new information - given that it is a New York newspaper it might even have been original journalism occurring there.

In an increasingly transparent world it is becoming harder to plagiarize, even when it is through translation. Of course, defining plagiarism becomes trickier. Every week I encounter dozens of nearly indistinguishable versions of a scientific finding, all close derivatives of university PR department press releases. Should that be regarded as plagiarism, since the journalists are not contributing much? If we accept it as plagiarism I would guess 90% of science reporting is plagiarism - plagiarism the "victim" promotes.

I doubt I can avoid copying - deliberately or not - elements of other information when I write. My experiments with fingerprinting political texts show that we quote/plagiarize each other quite often. But what I can do (besides trying to keep straight who said what originally) is to ensure that each post or text at least tries to bring together two or more previously unrelated pieces of information, creating a synthesis. Otherwise there would not be any point in having a new text. Maybe that is the real crime of plagiarism: not the copying, but simply not contributing anything new or better. The cab drivers had a prize for "going the extra mile"; maybe everybody who writes stuff for a living ought to have that too.

April 03, 2008

Realist Transhumanism

Yesterday I had the delight of spending an afternoon strolling and discussing AI and ethics with Roko Mijic. He has an interesting posting on his blog Transhuman Goodness: Transhumanism and the need for realist ethics (which was also the subject of much of our discussion). Basically, he points out that transhumanism is all about reconsidering the human condition, including many of the things we value. But unless we have a good theory of what is valuable in itself even in a radically posthuman future, how could we convince anybody it is a good thing? He argues that this requires us to be moral realists.

I'm not entirely convinced. But I think he points out a big hole in contemporary transhumanist discourse. The attempts at building philosophical systems of the early Extropians were replaced by general technophilia in the mid-90's, and today we are waist-deep in biopolitics. The *why* of transhumanism is pretty important if we want to go beyond "because I want it!"

I personally think one can defend transhumanism simply on the grounds of individual freedom: different people have different life projects and different things that make them happy, and we cannot easily figure out a priori how to live a good life suited for ourselves, so we need to experiment and learn. This requires us to respect other people's right to experiment and learn, and this forms the basis for a number of freedoms. Transhumanism could be defended simply as the right to explore the posthuman realm.

This appeals to libertarians like me and it does not require moral realism. But clearly many think this account is "anaemic". Transhumanism is about seeking something good, not just that we are allowed to dip our toes in the pool if we want to. And clearly we need to have some concept of good and bad outcomes in order to judge whether e.g. evolutionary traps (like becoming cosmic locusts or non-conscious economic actors) are worth avoiding.

I think one clear value is open-endedness. Existential risks are about the end or fundamental limitation of the human (or posthuman) species. Being dominated by superintelligence (however benign) seems worse than not being dominated. The above liberal rights argument is based on human desires and lives being potentially open-ended; if there were a clear optimum we should just implement it.

I also have a very strong intuition that complexity has value. I want to make the universe more complex, more interesting than it was before. This involves creating more observers and actors, more complex systems that can both develop unpredictably and retain path dependence: it is in the contingent selection of pathways that decisions gain value and systems become unique (i.e. they can never be repeated by re-running the outside system). That requires not just a lot of very transhuman technologies to awaken a lot of complacent matter into dynamic life, but also open-ended processes. If a system can only change state between a finite set of possibilities it will be fundamentally limited and the total amount of complexity will be bounded. If the state space can be extended the complexity can grow. (Of course, defining a good complexity measure seems to be a fool's errand.)

I would nominate open-endedness as a very fundamental transhumanist value: avoiding foreclosing options, avoiding avenues that would lead to irreversible entrapment, maximising the options for growth. It is the essence of sustainability, not in the narrow green-ideological sense in which the word is commonly used but as an actual aim to view reality as an infinite game. Maybe this is again just an instrumental value to achieve other things, but even as an instrument it does seem to produce a lot of ethical effects. It also opens up some curious issues, like when we ought to close down options that lead to non-open consequences: do they have to be very risky, or is it enough that they are limited?