April 28, 2008

BCI, AGI & WBE

Accelerating Future » Japanese BCI Research Enters the Skull discusses the possibility of intelligence amplification using brain-computer interfacing (BCI). Michael Anissimov thinks this is much harder than creating artificial general intelligence (AGI) and worries that it will lure people from AGI to BCI because it may help us rather than some constructed mind children. Roko takes up the thread in Artificial Intelligence vs. Brain-computer interfaces and claims that AGI is the easier and safer path to superintelligence.

I think both of my friends are missing the point: BCI and AGI aim at different things, use different resources and are competing with another acronym, WBE.

Roko argues that the brain will be a mess to connect to; no quarrel with that. But the scope of the problem is relatively limited: BCI is currently all about adding extra interfaces, essentially new I/O abilities. This is what it will be about for a very long time. If it develops well we will first get working I/O so that blind and deaf people can see and hear reasonably well, paralysed people can move, and neuroscientists can get some interesting raw data to think about. Later on the bandwidth and fidelity will increase until all sensory and motor signals can be intercepted and replaced. Beyond that, interfaces would connect other brain regions to computers, enabling information exchange at higher levels of abstraction. It is only at this point that serious intelligence amplification even begins to be possible. The previous stages might enable quick user interfaces for smart external software, but they would hardly be orders of magnitude better than using external screens, voice recognition or keyboards.

The community developing BCI does not appreciably overlap with the artificial intelligence community except perhaps for a few computational neuroscientists. In fact, the context for BCI development involves medical engineering, the legal, corporate and economic context of hi-tech medicine and much work on implant engineering and signal processing. Meanwhile AGI is a subset of AI, and exists within a software-focused community funded in entirely different ways. It is pretty unlikely these fields compete for money, brains or academic space. The only place where they compete is in transhumanist attention.

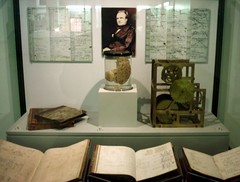

However, there is a third way towards superintelligence. That is building on whole brain emulation (WBE). WBE is based on scanning a suitable brain at the right resolution, then running an emulation of the neural network (or whatever abstraction level works). Leaving aside issues of personal continuity and philosophy of mind, if WBE succeeds in making a software emulation of a particular human brain, we would stand at the threshold of a superintelligence transition very different from the super-smart AI envisioned in AGI or the augmented cyborg of BCI. The reason, as Robin Hanson pointed out, is that now we have copyable human capital. The possibility of getting a lot of brainpower cheaply changes things enormously (even a fairly expensive computer is cheaper than the cost of educating a person for a skilled job like, say, lawyer or nuclear physicist). While individual emulated minds are not smarter than the original, the possibility of running large (potentially very large) teams of the best people for the task can allow them to solve many super-hard problems (see forthcoming paper by me and Toby Ord on that).

WBE is hard, but a different kind of hard from BCI and AGI. In BCI we need to make a biocompatible interface and then figure out how to signal with the brain - it requires us to gain a lot of experience with neural representations. These may be different from individual to individual. In AGI we need to figure out the algorithm necessary for open-ended intelligence, and then supply it with enough computing power and training for it to do something useful. In WBE we need to figure out how to scan brains at the right resolution, how to simulate brains at that resolution and have enough computing power to do it. In terms of understanding things, BCI requires understanding the individual neural representations (and probably for intelligence amplification, how to make them better or link them to AI-like external systems). AGI requires us to understand general intelligence well enough to build it (or set up a system that could get there by trial-and-error). For WBE we need to understand low-level neural function.

It seems to me that other technical considerations (biocompatible broadband neural interfaces, computing power, scanning methods) are quite solvable problems that, assuming funding for the area, will be solved with relatively little fuss. There are no fundamental physics or biology concerns that suggest they might be impossible (unless brains turn out to be run by quantum souls or something equally bizarre).

The big uncertainty will be which kind of understanding will be achieved first and best: how brains represent high-level information, how minds think or how low-level neural function works. The last one is least glamorous but seems solvable using a lot of traditional neuroscience: we have much experience in pursuing it. It is a slow, cumbersome process that produces messy explanations, but it will eventually get us where we need to be. We have much less information about how brains represent information (although this is symbiotic with the low-level neuroscience, of course) and no real idea whether there is a simple neural code, a complex one, or (as Nicolelis suggested) none at all and it is all about mutually adapting systems. For AGI we have even less certainty about how to make progress: are current systems (beside improved power) smarter than past systems? It seems that we have made some progress in terms of robustness, learning ability etc., but we have no way of telling whether or not we are close to the goal.

So my prediction is that AGI could happen at nearly any time (if it happens), WBE will happen relatively reliably mid-century if nothing pre-empts it, and BCI will be slow in coming and developing. WBE could (as Hanson shows) produce a very rapid economic transition due to cheap brainpower, and so could non-superintelligent AGI. BCI is likely to be slow in changing the world. BCI and WBE are on the other hand symbiotic: both involve much of the same neuroscience groundwork, they may have overlapping funding sources and research communities, and large-scale neural simulations are useful for developing both. If BCI or WBE manages to produce a good understanding of how the cortex does what it does (a general learning/thinking system that can adapt to a lot of different senses or bodies) that could produce bio-inspired AGI before BCI or WBE really transforms things.

The main concern transhumanists have with these technologies is to ensure friendliness. That is, the superintelligent entities created this way should not be too dangerous for the humans around them. Most efforts have aimed at doing this by looking at how to design hypothetical beings to be friendly. This naturally leads to the assumption that non-designed superintelligences are too risky, a popular position among AGI people. However, this may be too narrow an approach to friendliness. The reason people do not kill each other all the time is not just evolved social inhibitions but also a number of other factors: culturally transmitted values and rules, economic self-interest, reputations, legal enforcement and the threat of retaliation, to name a few. This creates a multi-layered defence. Each constraint is imperfect but reduces risk somewhat, and a being needs to fall through all of them in order to become dangerous.
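To make the layered-defence arithmetic concrete, here is a minimal sketch. It assumes, purely for illustration, that the layers fail independently of each other (real constraints are surely correlated), and the failure probabilities are made-up numbers rather than estimates of anything. The function name `residual_risk` is likewise just a label for this example.

```python
from math import prod

def residual_risk(failure_probs):
    """Chance that a being slips past every layer.

    Assumes the layers fail independently, so the chance of falling
    through all of them is the product of the per-layer failure chances.
    """
    return prod(failure_probs)

# Hypothetical, illustrative failure probabilities for each constraint.
layers = {
    "social inhibitions": 0.5,
    "cultural values and rules": 0.5,
    "economic self-interest": 0.5,
    "reputation": 0.5,
    "legal enforcement and retaliation": 0.5,
}

risk = residual_risk(layers.values())
print(f"Residual risk with {len(layers)} layers: {risk:.5f}")
```

Even if each layer in this toy setup is no better than a coin flip, five of them together let only about 3% through. With no constraining context at all the product is empty and the residual risk is 1, which is the situation assumed in a hard take-off.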

The AGI people practically always seem to assume a hard take-off scenario where there is just a single rapidly improving intelligence and essentially no context limiting it. In this case the constraints will likely not be enough and the only chance of risk mitigation is friendly design (which would be subject to miscalculation) - or avoiding making an AGI in the first place (guaranteed to be safe). If we had serious reason to think AGI with hard take-off to be likely, the rational thing might be to prevent AGI development - possibly with coercive methods, since the risks would be too great.

BCI and WBE cannot guarantee friendly raw material, but the minds would be related to human minds. We already have a pretty good idea of how to motivate human-like entities to behave in a proper manner. Their development would also be relatively gradual. BCI would be especially gradual, since it would both have a long development time and remain limited by biological timescales. The fastest WBE breakthrough scenario would be a neuroscience breakthrough that enables already developed scanning and simulation methods to be run on cheap hardware. A more likely development would be that simulations are computer-limited and become larger and faster at the same rate as hardware. This gradual development would enmesh them in social, economic and legal ties. The smartest BCI-augmented person would likely not be significantly smarter or more powerful than the second and third smartest, and there would exist a continuum of enhancement between them and the rest of humanity. In the case of emulations the superintelligence would exist on the organisational level in the same way as corporations and nations outperform individuals, and it would be subject to the same kind of constraints and regulations as current collective entities. Hence, while we have no way of guaranteeing the friendliness of any of the entities in themselves, we have a high likelihood of having numerous kinds of constraints and motivators to keep them friendly.

If one's goal is to become superintelligent, BCI and WBE both seem to offer the chance (WBE would allow software upgrades not unlike BCI IA in due time). If one's goal is to reduce the risk of unfriendly entities, one should avoid AGI unless one believes in a soft take-off. If one's goal is to maximize the amount of intelligence as soon as possible, then one must weigh the relative likelihoods of these three approaches succeeding before each other and go for the quickest that extra effort can speed up.

Posted by Anders3 at April 28, 2008 09:20 PM