April 03, 2008

Realist Transhumanism

Yesterday I had the delight of spending an afternoon strolling and discussing AI and ethics with Roko Mijic. He has an interesting posting on his blog Transhuman Goodness: Transhumanism and the need for realist ethics (which was also the subject of much of our discussion). Basically, he points out that transhumanism is all about reconsidering the human condition, including many of the things we value. But unless we have a good theory of what is valuable in itself even in a radically posthuman future, how could we convince anybody it is a good thing? He argues that this requires us to be morally realist.

I'm not entirely convinced. But I think he points out a big hole in contemporary transhumanist discourse. The attempts at building philosophical systems of the early Extropians were replaced by general technophilia in the mid-90s, and today we are waist-deep in biopolitics. The *why* of transhumanism is pretty important if we want to go beyond "because I want it!"

I personally think one can defend transhumanism simply on the grounds of individual freedom: different people have different life projects and different things that make them happy, and we cannot easily figure out a priori how to live a good life suited for ourselves, so we need to experiment and learn. This requires us to respect other people's right to experiment and learn, and this forms the basis for a number of freedoms. Transhumanism could be defended simply as the right to explore the posthuman realm.

This appeals to libertarians like me and it does not require moral realism. But clearly many think this account is "anaemic". Transhumanism is about seeking something good, not just about being allowed to dip our toes in the pool if we want to. And clearly we need to have some concept of good and bad outcomes in order to judge whether e.g. evolutionary traps (like becoming cosmic locusts or non-conscious economic actors) are worth avoiding.

I think one clear value is open-endedness. Existential risks are about the end or fundamental limitation of the human (or posthuman) species. Being dominated by superintelligence (however benign) seems worse than not being dominated. The above liberal rights argument is based on human desires and lives being potentially open-ended; if there were a clear optimum we should just implement it.

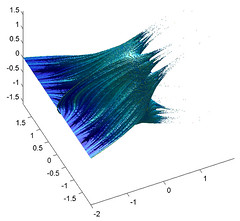

I also have a very strong intuition that complexity has value. I want to make the universe more complex, more interesting than it was before. This involves creating more observers and actors, more complex systems that can both develop unpredictably and retain path dependence: it is in the contingent selection of pathways that decisions gain value and systems become unique (i.e. they can never be repeated by re-running the outside system). That requires not just a lot of very transhuman technologies to awaken a lot of complacent matter into dynamic life, but also open-ended processes. If a system can only change state within a finite set of possibilities, it will be fundamentally limited and the total amount of complexity will be bounded. If the state space can be extended, the complexity can grow. (Of course, defining a good complexity measure seems to be a fool's errand.)

I would nominate open-endedness as a very fundamental transhumanist value: avoiding foreclosing options, avoiding avenues that would lead to irreversible entrapment, maximising the options for growth. It is the essence of sustainability, not in the narrow green-ideological sense in which the word is commonly used but as an actual aim to view reality as an infinite game. Maybe this is again just an instrumental value to achieve other things, but even as an instrument it does seem to produce a lot of ethical effects. It also opens up some curious issues, like when we ought to close down options that lead to non-open consequences: do they have to be very risky, or is it enough that they are limited?

Posted by Anders3 at April 3, 2008 05:25 PM