September 30, 2007

GIGO Adaptation

Projecting Heat-Related Mortality Impacts Under a Changing Climate in the New York City Region (Knowlton et al., American Journal of Public Health, doi:10.2105/AJPH.2006.102947) analyses the effect of climate change on heat-related premature mortality. The authors project increases in the 2050s of between 47% and 95%, with a mean increase of 70% compared with the 1990s, and find that acclimatization (i.e. people getting used to the heat and making more use of air conditioning) reduces these estimates by 25%.

What is wrong with this picture?

Leaving aside the uncertainties of the climate model, the authors make use of current data for the population health response to heat, and use current data from cities with a climate similar to that of 2050 New York to estimate the effect of acclimatization.

What I'd like to see is a validation of this method by using 1963 US data on mortality and air conditioning use in today's climate. In 1963 domestic air conditioning had just recently become affordable and was still a middle class status symbol, the death rate across the population was 17% higher and the (real, inflation adjusted) GDP per capita was just $15036 compared to $37807 in 2006. Does anybody imagine it would have been even remotely right?

Assuming that the economic trends continue we should have a 250% richer population in 2050, air conditioning would have had the time to become cheaper and more widespread, the demographic structure would have become completely different and in particular, if heat-related mortality is viewed as a problem by the future people, they would also have worked on solving it. While building a model on the real data of today is far better than just making something up, it still has serious problems extrapolating this far. They claim:

Although considerable uncertainty exists in climate forecasts and future health vulnerability, the range of projections we developed suggests that by midcentury, acclimatization may not completely mitigate the effects of climate change in the New York City metropolitan region, which would result in an overall net increase in heat-related premature mortality.

What they have actually shown is that 2007 acclimatization would not mitigate a possible 2050 weather. Which is a bit like arguing that 1963 car safety efforts would be unable to mitigate traffic deaths in 2007 very much.

Both this study and an earlier one seem to make use of weather-based mortality data averaged from 1964-1991. This means that any change over time in this nearly 30-year interval gets removed. It would be far more interesting to extract this change and try to extrapolate it, even if the extrapolation would introduce far more uncertainty in the final numbers. Better a broad confidence interval that tells us something true but uncertain than a narrow interval we can be certain is not true.

While no doubt a changed climate can bring nasty problems, one should be very careful about extrapolating from the present. At the very least one should check for things like Garbage in, garbage out in any model. But running things through a computer and especially invoking climate change seems to excuse anything.

Prolegomena to a Page of Great Beards of Science

Faculty of Philosophy: The Amoral Sciences Club has a page about great beards of philosophy. Somebody better make a page for the great beards of science. Here are some initial suggestions.

The philosopher page claims Charles Darwin, but honestly he was more of a biologist than a philosopher.

Dmitri Mendeleev has always in my mind been the top contender for the real beard of science. Russian, flowing, elemental.

Ivan Pavlov had a neatly conditioned beard.

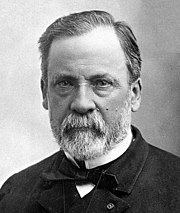

Louis Pasteur had a similar neat little beard. Not much space there for any microbiology.

Wilhelm Röntgen on the other hand had a very opaque beard.

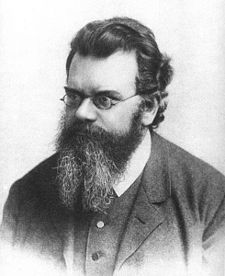

Ludwig Boltzmann had a wilder beard that could only be described statistically.

August Erlenmeyer of course had a conical beard.

Antoine Becquerel had a radiant beard.

James Clerk Maxwell on the other hand had a more wavy beard.

Ibn al-Haytham (Alhazen), al-Khwarizmi and the other Islamic golden age scientists (plus all the Greek scientists) likely had impressive beards, but exact records do not seem to have survived.

Conversely, if Aubrey de Grey gets SENS to work we will see his beard for a long time.

September 28, 2007

Saving the World

Bob the Angry Flower - Planet in Peril explains exactly how to deal with climate change.

September 25, 2007

Graffiti Archaeology

Graffiti archaeology (flash) is an interesting piece of information/art visualisation. It allows the viewer to select a spot and move through time to see how different pieces of graffiti appear on top of each other. It is fascinating to see both the change of styles and surroundings and the interactions between the pieces of graffiti.

It reminds me of Grant Schindler's work on inferring temporal order of images. While the archaeology site is clearly based on enthusiasts carefully pooling and distorting pictures to fit, Schindler's approach is more suited for creating the same kind of result automatically. I wonder if there isn't a lot of automated camera footage out there just waiting for this kind of indexing, allowing the production of interactive 4D models of our environment over long periods of time.

September 21, 2007

Responsible Provocations

As a Scandinavian, I feel like I almost have a duty to start a webcomic dealing with religion. Of course, it is pretty hard to beat Jesus and Mo.

The ongoing Lars Vilks Muhammad drawings controversy has led the former foreign minister Pierre Schori to argue that Vilks is not extending freedom of thought (link in Swedish). Duh. He thinks Vilks ought to have shown some respect for the heartfelt views of others, since in a globalised world even a local statement can be heard far and cause reactions. Duh again.

Freedom of speech, thought and religion are much more profound than Schori seems to think. He seems to think such things are mainly relevant in the battle against tyrants and fanatics in remote countries, not at home. That provocations like these caricatures make it harder for moderates to work in the Middle East is not enough to limit the right to provoke, or even the desirability of provocation. If one wants to promote peace it might be rational to avoid provocation, but Vilks's entire career has been about producing provocations that occasionally had repercussions beyond the artwork itself (like the waste of bureaucracy caused by Ladonia). In fact, we should be deeply concerned about what he will have to do next time, since clearly he has to escalate.

Schori's problem is that he is thinking in terms of social responsibility, not moral responsibility. If freedom of speech is viewed as a moral matter, then we have a moral obligation not to interfere with the speech of others, yet we may praise or blame them morally for what they said and morally criticise people interfering in their speech. Moral responsibility is close to absolute. If freedom of speech is instead merely a social matter, then interfering with speech may be socially justified. This is problematic, and the same issue of the newspaper gives a good example.

It reports that the Thai government now demands that YouTube block clips that can cause "general confusion and threaten national security", that is, clips accusing the former premier Prem Tinsulanonda of having been the brains behind the military coup last year. This is a valid example of socially justified interference with free speech. It is just that most outsiders would regard the justification as terribly weak and that Thailand would likely be better off having an open discussion about the issue.

But assume the government was right about the claims hurting social order. Might they not still be relevant? Sometimes truths that hurt both the feelings of people and the stability of a society must be heard. Revealing corruption, tyranny or just hidden biases has produced a significant amount of unrest. If the suffragettes and later the students in Prague 1968 had just stayed home a lot of trouble could have been avoided. Extremely unpopular views, whether about the orbits of the planets, human evolution or the subconscious, have transformed our civilization for the better. Should we have demanded of Darwin and Freud that they ought to have revealed their ideas responsibly, in order to not upset anybody?

The reason we need freedom of speech defended as a moral right and not just as a social right is that 1) social rights are based on the current social situation, and 2) we have problems distinguishing provocations with real content from those without. Upsetting the social order is sometimes worthwhile, making acting in a "socially responsible way" irresponsible in a larger scheme of things where possible future states of society are factored in. But the only way of finding out is to have a broad epistemic process - many people thinking and debating - analyse the ideas. That requires the freedom in the first place.

The second reason is the classic Locke argument and hardly needs any comment. Except that Vilks might be a good example: most of his work has been IMHO rather empty provocations. Yet the current controversy does bring out a lot of interesting responses and might actually be a relevant provocation: how little do you have to do to get a price on your head? Showing that in the global village everybody will hear you when you insult their favorite holy cow, that is actually a relevant use of free speech.

Clearly, if we are to be "responsible" in the social sense we should avoid upsetting anybody (except those it is socially allowed to attack: saying something nasty about George W Bush is a guaranteed crowd pleaser at European scientific conferences, especially when said by an American - yet saying the exact same things about other people would be regarded as embarrassing and irresponsible). This form of responsibility has enormous chilling effects. No jokes about religion or politics, and for heaven's sake don't mention the War! The emperor's clothing would remain unassailed.

If we are responsible in the moral sense instead, we will try to tell the truths we think are important. Not because we wish to upset people, but because we think the ideas are important for others to hear. We might be wrong about that (thousands of conspiracy theorists and creationists blather endlessly all the time). But it is better than self-censorship, and we are free to call bullshit bullshit. We might do it politely, we might do it bluntly - the better communicators will win.

September 19, 2007

Keeping Journalists Honest

This week on CNE I blog about competing interests among journalists, as brought up by Ben Goldacre.

My own strongest demand to science journalists is simple: give literature references or at least a link to the paper you are basing the story on! The vast majority of articles at most give a passing reference to an author of a study and maybe a university, but that is it. Sometimes one gets a reference like "in this month's journal name" that often turns out to be erroneous. This makes it hard for the interested reader to actually check the paper, and contributes to the impression that most such articles are written straight from the university PR department's press release. The excuse that there might not be room in the paper version might be true, but there is absolutely no such excuse for web versions of newspapers.

My admonition of course also extends to university PR departments: press releases should have references too! Leaving them out just because journalists may leave them out is no argument; they do not cost anything, it is unlikely the journalists will be turned away by seeing them, and they would help the university's credibility too.

September 18, 2007

Stop the Memory Hole

Wired writes about how YouTube Bans Anti-Creationist Group Following DMCA Claim. This ought to become an example of the chilling effects of DMCA and similar recent laws.

Basically a creationist group sent a DMCA request to YouTube after the Rational Response Squad picked apart a few of their claims. This in itself is nothing new; the Foresight Institute got into a hot discussion with Scientific American back in the days when they wrote a line-by-line rebuttal of an anti-nanotech article. But in this case not only would fair use apply, the creationist website also claimed that none of the materials were copyrighted since they wanted them spread (but not criticized).

Except that that no-copyright clause just went down the memory hole - after people reacted to this inconsistency. A YouTube employee looking today would get a very different view than the true state.

In this case this will hardly matter. It is too little too late, and will likely add fuel to the fire. But had this not been a cause célèbre among atheist bloggers, who would have stored files proving the change? It is already very easy to get a website or movie clip removed by claiming copyright regardless of actual merit. Often the decision is made by people with no schooling in IP law and few resources to examine the claim. This makes copyright claims a very effective means of stifling criticism. Given the transient and changeable nature of the web it does not seem too hard to retroactively shore up false or anti-fair use claims.

The lesson of this is clear: we need better wayback machines but also to store what we browse and use individually. Ideally this stored material should be hard to manipulate, making it better evidence in a court case. Given the growth of storage space it is not too absurd to have a cache that stores everything, possibly timestamped and authenticated using trusted computing (hey! it can be used for at least *one* other benign purpose besides checking that P2P or internet gambling client software is not forged :-) Then we need to figure out how to make the response to takedown claims rational. That is going to be much harder.

September 14, 2007

Intellectual Integrity

The BBC mentions that Sir David King has proposed a scientific profession ethics code (via Adventures in Ethics and Science). The code has seven principles:

- Act with skill and care, keep skills up to date

- Prevent corrupt practice and declare conflicts of interest

- Respect and acknowledge the work of other scientists

- Ensure that research is justified and lawful

- Minimise impacts on people, animals and the environment

- Discuss issues science raises for society

- Do not mislead; present evidence honestly

Nice, but how is that different from the normal values of science?

Maybe the mistake is to claim this ought to be a professional code. Maybe we should instead declare this to be a universal human code of conduct for intellectual integrity. Media ought to follow it. These are values the public should try to act according to, when discussing science and what it ought to do. All these points are points about intellectual honesty we ought to tell children about.

Man's unfailing capacity to believe what he prefers to be true rather than what the evidence shows to be likely and possible has always astounded me. We long for a caring Universe which will save us from our childish mistakes, and in the face of mountains of evidence to the contrary we will pin all our hopes on the slimmest of doubts. God has not been proven not to exist, therefore he must exist.

-- Academician Prokhor Zakharov, "For I Have Tasted The Fruit"

September 13, 2007

Causes of Death

A while ago I saw the National Geographic diagram of causes of death as tabulated by the NSC. While cool as data, as a visualisation it was pretty bad, as discussed here and here. Some people .

Here is my own take on it, based on hierarchical pie diagrams (pdf file) (I took the data from here, which incidentally shows how well information can be displayed just through a table). I have attempted to make it area-based: the annuli become thinner as they move outward to keep the total area constant.
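The area-based layout can be sketched in a few lines. This is only an illustration under my reading of "keep the total area constant", namely that every ring of the hierarchy covers the same area as the innermost disc; the function name `ring_radii` and the unit inner radius are assumptions, not taken from the script that made the pdf.

```python
import math

def ring_radii(levels, inner_radius=1.0):
    """Outer radius of each ring so that all rings have equal area.

    Ring n spans r[n-1]..r[n]. Equal areas mean r[n]^2 - r[n-1]^2 is
    constant, which gives r[n] = inner_radius * sqrt(n).
    """
    return [inner_radius * math.sqrt(n) for n in range(1, levels + 1)]

# Radial thickness of each ring: the innermost disc, then each annulus.
radii = ring_radii(4)
widths = [radii[0]] + [outer - inner for inner, outer in zip(radii, radii[1:])]
```

Since the radii grow like the square root of the level, the ring thickness shrinks with every step outward, which is the thinning described above.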

This approach emphasizes the big areas we ought to worry about - traffic accidents, falls, assaults, accidental poisoning and suicide. Small risks like radiation are invisible. When talking about mortality people spend far too much time worrying about small exotic risks and far too little worrying about everyday risks.

I wonder about the possible serious underrepresentation of iatrogenic deaths here. Likely they are nearly all classified as due to disease when they ought to be in here. If added, they would make the last segment much larger than just 2,843 people.

Abstract Network

During some easy-to-follow talks at SENS 3 I wrote a small program to find similarities between the abstracts at the conference. The result is this graph (pdf).

The basic approach is to add a connection between all authors using the same word. The strength of the connection is 1/k^2, where k is the number of authors using that word: common words just add weak connections, while distinctive shared words produce strong links. People sharing abstracts of course end up strongly linked, but we get subject groups due to text similarities too.
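A minimal sketch of that weighting, not the original program: it assumes abstracts arrive as a dict from author to text and that words are split on whitespace after lowercasing. The name `similarity_graph` and the input format are made up for illustration.

```python
from collections import defaultdict
from itertools import combinations

def similarity_graph(abstracts):
    """abstracts: dict mapping author -> abstract text (assumed format).

    Returns a dict mapping frozenset({a, b}) -> link strength, where every
    word shared by k authors adds 1/k^2 to each pair among those authors.
    """
    # Which authors use each word (each word counted once per author).
    authors_by_word = defaultdict(set)
    for author, text in abstracts.items():
        for word in set(text.lower().split()):
            authors_by_word[word].add(author)

    strength = defaultdict(float)
    for authors in authors_by_word.values():
        k = len(authors)
        if k < 2:
            continue  # a word used by one author links nobody
        for a, b in combinations(sorted(authors), 2):
            strength[frozenset((a, b))] += 1.0 / k**2
    return dict(strength)
```

Thresholding then amounts to dropping every pair whose accumulated strength falls below some cutoff before handing the graph to a clustering tool.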

After thresholding the graph I ran Newman and Girvan's community structure algorithm in yEd to cluster the network. The result is pretty nice, showing the big central theme network in the upper left and the "Italian gang" in the lower right, as well as smaller topics and coauthor groups.

I did not use word frequencies here, but an obvious possible improvement would be to use mutual information components like log(P(word,person)/(P(word)P(person))) and two-word patterns for higher specificity.
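As a rough sketch of what such a component could look like, the following estimates the probabilities from raw word counts. This is one possible estimator among several; the function name `pmi_scores` and the dict-of-texts input are illustrative assumptions, and the program described above did not do this.

```python
import math
from collections import Counter

def pmi_scores(abstracts):
    """abstracts: dict mapping author -> abstract text (assumed format).

    Returns {(word, author): log(P(word, author) / (P(word) * P(author)))},
    with all probabilities estimated from token counts.
    """
    pair_counts = Counter()
    for author, text in abstracts.items():
        for word in text.lower().split():
            pair_counts[(word, author)] += 1

    total = sum(pair_counts.values())
    word_counts = Counter()
    author_counts = Counter()
    for (word, author), n in pair_counts.items():
        word_counts[word] += n
        author_counts[author] += n

    # (n/total) / ((wc/total) * (ac/total)) simplifies to n*total / (wc*ac).
    return {
        (word, author): math.log(
            n * total / (word_counts[word] * author_counts[author])
        )
        for (word, author), n in pair_counts.items()
    }
```

A word spread evenly over all authors scores near zero, while a word concentrated in one author's abstract scores high, so such scores could replace the flat 1/k^2 weight.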

September 12, 2007

Study Bias Everywhere

This week's CNE Health blog is about the War on Study Bias. Scott Gottlieb warns against government biases in medical cost-effectiveness studies, adding to the complexity of dealing with funding bias. I find it interesting that people are much more ready to attribute bias to corporate interests than to government interests, as if being labeled a government organisation suddenly made its members and overall behavior completely unselfish and unbiased. Maybe it is the ease with which we can come up with selfish, minimum-research explanations of corporate behavior (it wants to make money) that makes government organisations look neutral.

September 11, 2007

Trans-Rodentism

A Thinking Ape's Critique of Trans-Simianism by Dresden Codak deserves to become a transhumanist classic. It is a thorough rebuttal of the curious notion that in the future we might, within a single generation, develop one or possibly even two new ideas.

On the other hand we have the transrodents:

Professor Sqiiik of the Neuroscience Department of the University of Cambridge recently suggested an unusual explanation for the genetic instability trend befalling rodent populations worldwide. According to him:

"We know that a large number of mutations are occurring these days, creating divergent families of rodents. Some can no doubt be explained by random mutations or environmental factors such as contaminated cheese. Dr Piiki from Oxford has suggested that we are seeing a case of genomic instability emerging. However, this theory has a hard time explaining why it occurs worldwide, at the same time and in such a patchy manner. We know that rats living near each other tend to develop linked mutations - rats living in the neuroscience department tend to get brain-related mutations, rats in the dermatology department get skin-related mutations while the genetics department produces mutations of all kinds.

I will stick my whiskers out and argue that we are seeing intelligent design. That is, there is a force out there that causes these mutations according to some plan. I have several pieces of evidence in favor of this admittedly strange conclusion.

The first one is the unlikeliness of many mutations. While we often see loss of function in the sufferers of "knock-out syndrome", sometimes we also see gain of new functions. Recently several cases of familial fluorescence have been discovered, involving a gene that seems to have jumped from jellyfish to our species! There is ample evidence that genes from other species have appeared, including human genes, fly genes and bacterial genes.

But many of these genetic changes are not merely exceedingly unlikely, they are also strangely beneficial. We have evidence of mice developing extended lifespan, resistance to cancer, resistance to obesity, better memories, increased musculature and regeneration, color vision and a long list of other gains. While the majority of mutations produce tragic losses, the number of exceedingly unlikely beneficial mutations is far above what mere randomness could explain. They are also becoming more and more common. Even more telling, we see non-genetic complex changes such as the development of ears on the back, which clearly cannot be explained using genetic instability.

A parsimonious explanation would be a designer (or designers) aiming at enhancing our species. A perfect designer would of course instantly make us immortal, strong, smart and healthy, but imagine a weak and fallible designer who does not understand rodent biology. Such a being would be performing trial-and-error experimentation, trying to learn what works. If there are several such beings they may even specialise, explaining the differences between different places.

An obvious criticism is that if the designer is trying to help us, why is it causing so much pain and suffering? One possibility is of course that the designer is not truly helping us but merely attempts to understand biology in general, maybe aiming to help some other species like (say) humans. But a pro-human designer would clearly insert discoveries already made in rodents, and we see no evidence that humans have become smarter or even fluorescent.

I instead believe we are being tested. The designer seeks to help us in its inefficient, fumbling way, but in the process it also wants to learn what it truly means to be a rodent."

September 01, 2007

Nervous About Resizing

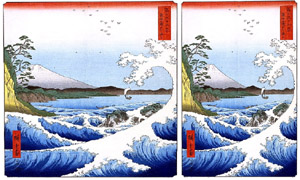

Communication Nation posts about a New technology for smart image resizing, developed by Shai Avidan and Ariel Shamir (Seam carving for content-aware image resizing, ACM Transactions on Graphics, Volume 26, Issue 3 (July 2007)) (here is a free pdf). The idea is to not just squeeze or crop the image as it is resized to fit (say) a browser window, but to remove less informative parts so that important objects and people remain.

It is a very cool technology that is surprisingly simple once explained: horizontal and vertical "seams" with minimal gradient information are removed, and the seams are precomputed by running a dynamic programming method. Expanding the image can also be done using the same method, making image elements flow nicely. The movie is very convincing. It all seems to fit nicely with our cognitive system.
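To make the dynamic programming step concrete, here is a small sketch that finds and removes one vertical seam from a grayscale image stored as a list of rows. It is a simplified illustration rather than the authors' implementation: the energy function (sum of absolute horizontal and vertical differences) is a stand-in, and all function names are my own.

```python
def energy(img):
    """Simple gradient energy: |horizontal difference| + |vertical difference|."""
    h, w = len(img), len(img[0])
    e = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx = img[y][min(x + 1, w - 1)] - img[y][max(x - 1, 0)]
            dy = img[min(y + 1, h - 1)][x] - img[max(y - 1, 0)][x]
            e[y][x] = abs(dx) + abs(dy)
    return e

def min_vertical_seam(e):
    """Column index per row of the cheapest connected top-to-bottom seam."""
    h, w = len(e), len(e[0])
    # cost[y][x] = energy of the cheapest seam from the top row ending at (y, x).
    cost = [row[:] for row in e]
    for y in range(1, h):
        for x in range(w):
            lo, hi = max(x - 1, 0), min(x + 1, w - 1)
            cost[y][x] += min(cost[y - 1][lo:hi + 1])
    # Backtrack from the cheapest cell in the bottom row.
    x = min(range(w), key=lambda i: cost[h - 1][i])
    seam = [x]
    for y in range(h - 1, 0, -1):
        lo, hi = max(x - 1, 0), min(x + 1, w - 1)
        x = min(range(lo, hi + 1), key=lambda i: cost[y - 1][i])
        seam.append(x)
    seam.reverse()
    return seam

def remove_seam(img, seam):
    """Drop one pixel per row, shrinking the image width by one."""
    return [row[:x] + row[x + 1:] for row, x in zip(img, seam)]
```

Repeating the energy, seam and removal steps narrows the image one column at a time, and the same procedure on the transposed image removes horizontal seams.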

It made me nervous.

That images can be forged, edited and manipulated in numerous ways is nothing new. But this is realtime retouching, potentially making choices of what is important in an image. Imagine a photo in an online document using this technology. When fully expanded we will indeed see person Y between X and Z - no manipulation has been done to the original image. It is just that normally the browser window compresses the image and the page designer has made Y a preferred seam. We will tend to see X and Z, and likely never think of Y. This is a form of manipulation more subtle than just removing Y, since the designer can now truthfully say that the photo is authentic - the anti-Y activity is hidden in the seam data, which is just a display choice.

The expansion of images possible with this algorithm is also very impressive. But that added another worry: in the movies it was hard to see when we passed the size of the "real" picture and started to see generated data or when data was removed. The transition between real and unreal became seamless.

Maybe this is just me being old-fashioned and stuck in a modernist conception of objective reality. But a useful and elegant technology like this, apparently intended for inclusion in browsing, seems to make reality a bit too fluid. Or am I missing the point? Subjectively speaking we do this all the time in our brains anyway. We tend to overlook the boring stuff between the main objects in our visual field, we fill out most of the field with internally generated imagery and we often resize objects depending on saliency. Maybe we are simply breaking free of the old constraint that the canvas cannot grow or shrink. We are used to pictures remaining fixed in size and internal relations just because they couldn't change. Seam-carving makes images just as flexible as the world and our perception.

But I still think we are going to overlook Y on many websites.