June 30, 2009

My top 10 Frightening Scientific Papers

Here is my top 10 list of scientific papers that have frightened me. Some give unsettling insights into just how bad humans can be, others suggest that reason is helpless or that the world is dangerous in hard-to-fix ways. Perhaps a good list for a Halloween journal club meeting?

Honorable mention to Robin Hanson, who manages to get two papers onto the list!

- Stanley Milgram, Behavioral Study of Obedience. Journal of Abnormal and Social Psychology 67: 371-378 (1963). A psychology classic, describing how normal people can be convinced to give what they think are lethal electric shocks to another person just because a man in a lab coat says so. My dad vividly told me about the experiment when I was a kid, and I had nightmares about it afterwards.

- Haney, C., Banks, W. C., & Zimbardo, P. G. (1973). Interpersonal dynamics in a simulated prison. International Journal of Criminology and Penology, 1, 69-97. The infamous Stanford prison experiment. Another demonstration that it is quite possible to tease out cruelty from ordinary people.

- Christopher K. Hsee, Reid Hastie, Decision and experience: why don't we choose what makes us happy? Trends in Cognitive Sciences, Volume 10, Issue 1, January 2006, Pages 31-37. This is a review of studies in well-being and happiness that show that humans systematically fail to predict or choose what maximizes their happiness. This is bad news for autonomous choice and trusting one's own desires and decisions.

- Wolpert, D.H., Macready, W.G. (1997), "No Free Lunch Theorems for Optimization," IEEE Transactions on Evolutionary Computation 1, 67. (and related papers on the no free lunch theorem(s)) Shows that no algorithm is better than any other across all optimization problems. Since this includes practically any kind of rational thought (where we try to find the "best" solution) this is very depressing. Except of course that "all problems" covers an endless number of pathological and noisy problems - as long as we deal with the problems of a particular universe the theorems are not that bad.

- Dan M. Kahan, Paul Slovic, Donald Braman, John Gastil, Geoffrey L. Cohen. Affect, Values, and Nanotechnology Risk Perceptions: An Experimental Investigation. They demonstrated that people's attitudes to the risks of new technology are driven not so much by knowledge about it as by other cultural values, and that these attitudes polarize when people are given more information.

- Robin Hanson, Burning the Cosmic Commons: Evolutionary Strategies for Interstellar Colonization. A simple model of interstellar colonization that takes cultural evolution into account. The result is that colonizers will tend to evolve towards something akin to a locust swarm, using all resources for colonization and nothing for anything else.

- Robin Hanson, If uploads come first: The crack of a future dawn. Extropy 6:2 (1994). An analysis of the economic effects of copyable human capital. If it is possible to copy human minds into software, then there are strong economic reasons to suspect that there will soon be a population explosion of copies, pressing down wages while causing an economic expansion beyond anything seen before.

- John P. A. Ioannidis, Why Most Published Research Findings Are False, PLoS Medicine, August 2005. This paper quantifies the unreliability of scientific findings, showing that in some fields we should not trust individual papers at all.

- Tajfel, H. (1970). Experiments in intergroup discrimination. Scientific American, 223, 96-102. (and the subsequent papers on the minimal groups paradigm) By randomly placing people into groups, it is possible to get them to discriminate against outsiders (despite group membership being clearly arbitrary), discount information from outsiders and assume that they succeeded just because of luck.

- Ronald J. Jackson, Alistair J. Ramsay, Carina D. Christensen, Sandra Beaton, Diana F. Hall, and Ian A. Ramshaw. Expression of Mouse Interleukin-4 by a Recombinant Ectromelia Virus Suppresses Cytolytic Lymphocyte Responses and Overcomes Genetic Resistance to Mousepox. Journal of Virology, February 2001, p. 1205-1210, Vol. 75, No. 3. Describes an easy way to make a mousepox virus that overcomes resistance and is essentially 100% lethal. Could likely be adapted very easily to the human smallpox virus. While widely reported as a surprise, this paper argues that it could have been predicted - which would suggest that there might be different killer modifications for other viruses that can be predicted if one is so inclined.

Human Enhancement

Now the ETAG (European Technology Assessment Group) report on Human Enhancement is out. Well worth reading, and has some useful policy ideas.

I am too tired to write much more myself, having run an enhancement workshop and an enhancement symposium and lectured at a conference about enhancement over the last few days.

June 22, 2009

Swine flu, black swans, and Geneva-eating dragons

This Saturday I talked at ExtroBritannia about risk, or in particular, what statistics can tell us to worry/not worry about. As promised, here are the slides as PDF (6.5M)

In particular, the micromort measures I talked about come from this very instructional lecture.

For a good overview of how to handle power laws, see Power-law distributions in empirical data by Aaron Clauset, Cosma Rohilla Shalizi, M. E. J. Newman. A good technical book about these kinds of extreme distributions is Critical Phenomena in Natural Sciences: Chaos, Fractals, Selforganization and Disorder: Concepts and Tools (Springer) by Didier Sornette.

June 18, 2009

Who was that masked blogger?

Practical Ethics: Sometimes justice wears a mask: blogging, anonymity and the open society - I blog about the Night Jack case, where a UK court allowed the Times to expose the identity of a police blogger, which led to him being disciplined (and his prize-winning blog deleted).

My core argument is that we need a bit of anonymity to guarantee adequate protection against formal and informal reprisal. Most communication works better if it is non-anonymous, but the kind of communication that needs anonymity is extra important to an open society. The chilling effects of the court ruling go completely against the many other protections for whistle-blowers in society.

The main problem with anonymity is of course that nyms have a harder time building credibility. I am becoming more and more convinced that a trusted third-party identity service would be a good idea: you could document that you are who you are and that you have certain properties (being a policeman, holding a Ph.D. etc.) with the service, which supplies you with a unique (and sole) authenticated nym. This allows others to check that you have the right credentials, but not to get your identity. Issues of subpoenas are left as an exercise for the law students :-)

June 16, 2009

Smarter, and hence safer?

Less Wrong: Intelligence enhancement as existential risk mitigation - Roko brings up the question of whether intelligence enhancement might improve our resiliency against existential risk.

June 15, 2009

Never do business or medical experiments with friends and family

Practical Ethics: Not better than the alternative: an informal experimentation tragedy - I blog about the case of Yolanda Cox, who died of anaphylactic shock after being given an experimental drug treatment by her family.

While the media like to stress the life-extension angle of the drug, it was apparently intended to treat an ovarian condition.

In any case, the tragedy is a prime example of why it is risky to do experimental medicine on friends and family - the risk of groupthink and misplaced trust is great. Sometimes weak and formal links to other people protect us.

June 11, 2009

A road crammed with robots

I blog on Carwinism about the future of AI in cars: En väg full av robotar (in Swedish). Not that radical ideas, in my opinion (especially since The Economist beat me by a week), but the post got responses in GP, Allt om motor and HD.

My basic argument is that since sensors are becoming cheap, car automation is becoming standard and software is getting better, cars will essentially become robotic. Not necessarily fully autonomous, but even a bit of networking and independence can have pretty big effects on traffic. We should also not expect humans to be the best possible drivers, so at least in some situations it might be rational (and possibly mandatory) to leave control to the machines. But convincing people about it is going to be tricky - we want to believe we are in control of our cars.

Maybe that is a crucial problem for many "smart" technologies: the transition from tools to robots. We tend to think of the devices in terms of something that directly translates our actions or intentions into a result, with a strong causal link forward and an equally strong responsibility link backwards. When the device becomes autonomous we are just one influencing factor, and some actions of the device will not be due to us (and hence of uncertain responsibility). Since well-established technologies have a social and legal context based on assumptions about these links, transitioning from tool to robot might be inhibited for old technologies. Computers were already billed as being semi-autonomous, so we have fewer problems with them acting up than we would have with cars.

June 09, 2009

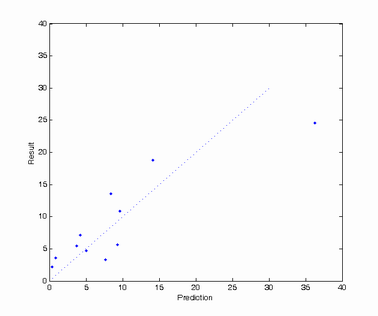

Calibrating my crystal ball

As a futurist, I should make sure to check past predictions to see whether I'm biased (or just bad at predicting). A month ago I wrote Andart: Using Google to predict elections. Now it is time to see how well my guesstimates came out.

| Party | Prediction | Result | Difference (pp) |
|---|---|---|---|
| Social Democratic Party | 36.3% | 24.6% | 11.7 |
| Moderate Party | 14.1% | 18.8% | -4.7 |
| Green Party | 9.6% | 10.9% | -1.3 |
| Left Party | 9.3% | 5.6% | 3.7 |
| Liberal People's Party | 8.4% | 13.6% | -5.2 |
| Sweden Democrats | 7.7% | 3.3% | 4.4 |
| Christian Democrats | 5.0% | 4.7% | 0.3 |
| Pirate Party | 4.2% | 7.1% | -2.9 |
| Centre Party | 3.7% | 5.5% | -1.8 |
| June List | 0.9% | 3.6% | -2.7 |
| Feminist Initiative | 0.4% | 2.2% | -1.8 |

I can't see any bias in any particular direction. I don't think I have any chance of beating the exit polls, but at least the numbers are in the right ballpark.

(On the Aumann agreement board at FHI we had a probability scale on whether the Pirate Party would get a seat; my final position was at 60% probability.)
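As a rough check on the "no bias" reading of the table above, here is a minimal Python sketch that recomputes the differences from the Prediction and Result columns and summarises them. The names `FORECASTS`, `errors` and `summary` are purely illustrative. One caveat: both columns sum to roughly 100%, so the mean signed error is close to zero almost by construction; the mean absolute error and the count of over- and under-predictions probably say more.

```python
from statistics import mean

# (prediction %, result %) per party, copied from the table above.
FORECASTS = {
    "Social Democratic Party": (36.3, 24.6),
    "Moderate Party": (14.1, 18.8),
    "Green Party": (9.6, 10.9),
    "Left Party": (9.3, 5.6),
    "Liberal People's Party": (8.4, 13.6),
    "Sweden Democrats": (7.7, 3.3),
    "Christian Democrats": (5.0, 4.7),
    "Pirate Party": (4.2, 7.1),
    "Centre Party": (3.7, 5.5),
    "June List": (0.9, 3.6),
    "Feminist Initiative": (0.4, 2.2),
}


def errors(table):
    """Signed error in percentage points: positive means over-prediction."""
    return {party: round(pred - result, 1) for party, (pred, result) in table.items()}


def summary(table):
    """Simple bias and accuracy measures for a set of forecasts."""
    errs = list(errors(table).values())
    return {
        "mean_error": mean(errs),                      # near zero if shares sum to ~100%
        "mean_abs_error": mean(abs(e) for e in errs),  # typical size of a miss
        "over": sum(e > 0 for e in errs),              # parties predicted too high
        "under": sum(e < 0 for e in errs),             # parties predicted too low
    }


if __name__ == "__main__":
    for name, value in summary(FORECASTS).items():
        print(f"{name}: {value:.2f}" if isinstance(value, float) else f"{name}: {value}")
```

A large mean absolute error alongside a near-zero mean error would suggest noisy but not systematically skewed guesses, which is about what one would expect from a back-of-the-envelope method.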

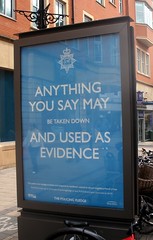

Precrime in Camden

Practical Ethics: Precrime in Camden: using DNA profiles for crime prevention - I blog about the claims from the UK police that DNA profiling of innocents is not just solving a lot of crimes but is so good at preventing them that it motivates arrests in order to get DNA samples. Unsurprisingly I am not convinced.

The problem isn't DNA databases, it is that we allow sloppy use of them. We should demand ten times as much transparency and accountability from our governments as they should be allowed to demand from us.

June 05, 2009

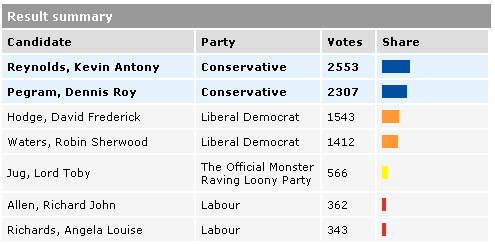

The Silly Party vs. the Sensible Party?

One can have bad elections, really bad elections... and elections where one comes in after the Official Monster Raving Loony Party. Labour just had one in St Ives. Oops.

Just more evidence that we do live in the world of Monty Python's Flying Circus. Or at least that the UK is part of the circus.

(via Bad Science)

Nasty, brutish and short

A new Science paper, Did Warfare Among Ancestral Hunter-Gatherers Affect the Evolution of Human Social Behaviors? (Bowles, Science 324 (5932): 1293; Wired has a story), tries to estimate how strong the selection pressure for altruism was in the late Pleistocene and early Holocene. Be that as it may with the model (which is interesting on its own), the estimated frequency of conflict is pretty shocking. The fraction of total mortality due to warfare is around 12-14%.

Anybody who claims the hunter-gatherer lifestyle is optimal for humans should consider this number. The current worldwide frequency of violent deaths is 1.28%. Modern hunter-gatherer societies tend to be pretty violent too, but there could be confounding effects. There might of course have been entirely peaceful tribes, but most of the archaeological evidence looks pretty violent. As noted in the paper, the late Pleistocene climate volatility would have caused periodic resource scarcities and likely intergroup conflicts - even the hypothetical peaceful tribes would have risked coming into contact with a warlike tribe during such times.

The paper's point is pretty simple: in-group altruism can be pretty costly, so there has to be a sufficiently strong evolutionary pressure to keep it going (since the genes of the self-sacrificing hero have to spread using his less heroic family members). The tribal warfare helped fix altruism in us.

Maybe one reason we humans do have so much cooperation is this long era of nasty Malthusian living. If we had been living in a world where group-group violence had been impossible, there would likely have been less selection for in-group altruism. We would have been sneakier people, more likely to seize advantage over our fellows/competitors whenever opportunity presented itself. Maybe we would even have had goatees.

Disaster movies!

Now the videos from the talks of last summer's conference on global catastrophic risk are up! (don't ask why it has taken nearly a year...)

Everything from the sociology of millennialism to galactic collisions to bioterrorism and insurance. What I really liked about the conference was the sheer breadth of subjects, and the interesting ways they interacted.

June 04, 2009

Revealing mistake

I love when equations in movies, comics or adverts actually make sense. All too often they are nonsense and reveal that whoever drew them did not even look into an ordinary textbook. Here is an advert where things are almost right (found via scaryideas.com) that I find pretty amusing. (click to magnify)

The equations on the nail are correct, but written in LaTeX. The advertising agency did check in some textbook, but actually wrote out the typesetting language rather than the equations as they would look in print. While I can imagine someone using the caret instead of superscript to fit a formula on a line, nobody writes "\cos x". Unless of course the exam the nail is intended to cheat on is a LaTeX exam.

June 02, 2009

Getting out of the ecostalinist car

I did a talk about "values for the future" at the Hay-on-Wye festival. The speaker before me brought up the climate change issue, and argued that in order to reach the 90% emission reduction he thought was necessary we should give up those misguided ideas about individual freedom and independence we have held since the Enlightenment.

I countered by pointing out that we might need something like 120% emissions reductions, and we might actually need those old-fashioned atomistic values even more.

As Eric Drexler blogs in Greenhouse Gases and Advanced Nanotechnology, most people make the mistake of thinking CO2 will just disappear if we stop producing it. Instead it remains in the atmosphere over a century timescale, affecting the heat balance. If one believes that there are already sinister effects on the biosphere, and especially if one is concerned with tipping points and positive feedback loops, then this is a very serious matter. Just urging (or achieving) emissions reductions will not fix the problem. There have to be very large carbon sinks, or one should argue for much bigger attempts at climate adaptation than is currently fashionable.

As I like to say, if you are in a car rolling downhill and want to stop, it is not enough to just stop pressing the gas pedal: you had better find the brakes, keep a hand on the steering wheel and wear your safety belt. A lot of past rhetoric has been of the type: "For heaven's sake, stop reaching for the brakes! They will distract you from not pushing the gas pedal! Or they might cause a skid!"

It was fun to see my audience when I explained that the choice might well be between climate change and widespread use of carbon-sequestering GMOs (while I like Drexler's approach, it may take a while to get there; we could seriously start working on ultra-sequestering plants right now, I think). They didn't like that at all. To their minds, climate change is largely just politics: get the politicians to make the right decisions, which are expected to amount to some kind of anti-consumerist program that brings out the nice old values of community, environment and austerity.

The real risk is of course that climate change becomes ecostalinism instead. One common theme in the discussion was how irrational and selfish people are, so it would be a good thing if this short-sighted behaviour gets inhibited by suitable authorities with long-term interests. Democratically controlled and accountable, of course.

But the problem with everybody unifying to deal with a great threat, temporarily setting aside frivolous interests and rights that otherwise might be important, is that such a unification cannot come from the outside. It has to a large degree to be internalized, a part of the current culture. Our effective values should change when we are faced with a threat - other things have priority. But what does this do to deliberation, dissent and exploration? The War on Terror has already demonstrated not only how authorities can use it as an excuse to make themselves unaccountable but how internalisation of the fundamental idea of being at war with extremism has produced a mindset that finds expressing dissent with "how things are done" to be tantamount to treason.

It is all too easy to imagine the same thing in the case of climate change. My previous speaker likened climate change denialists to flat earthers and creationists. Stupid and evil. But what about expressing scepticism over the conclusions made in IPCC reports or suggested courses of action? I have already seen far too many examples of people expressing scepticism over something (for example the often rickety models linking climate to GDP in future economies, whether climate refugees will actually matter at all, or the absurdity of many claims about ice melting) being accused of being "denialists". If we make climate the core of our political discourse, then it is very likely that any form of publicly expressed scepticism or criticism of any part of the edifice - built as much on ideology, politics and culture as on science - will be seen as disloyal and evil. At best it will be ignored and groupthink will rule, at worst it will lead to imprisonment (Australian commentator Margo Kingston has argued that "climate change denial" should be illegal just like holocaust denial - if one really thinks the stakes are human survival, then this actually makes sense). The problem is of course that stifling dissent will ensure that science will also be stifled (don't you *dare* find evidence against our current methods!), that mistakes and corruption will not be adequately reported and that in the long run the whole project is likely to diverge from reality. Not the best outcome for the biosphere or mankind's interests.

The real way forward is to stimulate exploration and dissent in order to figure out more about the situation. The current orthodoxies (that consumerism is the problem, that geoengineering and serious adaptation are out of the question, that only traditional technology is relevant etc.) are likely wrong at least in part and need challenging. If we all need to pull together, then we also need really good information to guide us and not just faith in authorities. For this we need strong protections for dissent - the social pressure towards conformity is going to be strong in any case. We mustn't lose the critical voices that point out that certain emperors lack clothing or that we ought to cover Wales with GMOs. They might be wrong, but they protect us from the overconfidence in planning we know has wrecked past projects and states. To give them up is to close one's eyes and let the car roll out of control.