April 25, 2007

A Right to Know

This week on CNE Health I blog about whether doctors should tell their patients about treatments that are not covered by third-party payers. Two articles in the BMJ take up the issue, one arguing for disclosure and one against. I think the disclosure side has far better arguments, and judging from the comments on the articles, the readers of the BMJ seem to agree.

Daniel K. Sokol also has a good article in the same issue, offering a few useful heuristics for supporting patient autonomy. I liked the idea of "thinking out loud" when answering the patient's question "what would you do?". It shows respect and invites discussion, especially since differences in evaluation and goals become clearer.

Google Sky

WIKISKY.ORG is the answer to Google Earth, a zoomable sky map that includes not only catalogued stars but also sky surveys in visible light and infrared, as well as a photo collection and a wiki. Given time (and interest from enough amateur astronomers) this could become a tremendous resource. It is already great fun and quite beautiful.

The Sloan Digital Sky Survey data is impressive, although there are many graphical artefacts that will no doubt keep the UFOlogists confabulating. I think the IRAS data is even more impressive, showing our galaxy as a glowing band of gas filled with shockwaves, bubbles, hotspots and globs. It feels almost like an anatomy textbook. Each object also has its own wiki, with related pictures and articles.

April 23, 2007

Science that Matters

Science That Matters is a blog with a nice idea: to post about scientific studies that really matter.

I was especially struck by The power of hope, an entry that picks apart the claims of the "Pygmalion effect": that schoolchildren do better when their teachers are told they will "bloom" intellectually. It turns out that this was not the result at all, but the good story got wings and spread, while the facts got left behind. A bit like how we can still hear about flatworm memory or the hundredth monkey. We really need blogs that both poke holes in these kinds of factoids and point out the significant research.

April 22, 2007

The Axis of Evil: Traditional Leaders in Nanotechnology since 1000 BC!

According to Iran's Cultural Heritage News Agency, Iranians Enjoyed Nanotechnology 3000 Years Ago (via Nanowerk).

The nanotechnology appears to be nanoparticles in paint (wow! just like nanoparticles in soot!) and nanoparticles "which attract harmful rays from mobiles which are employed in structures" (apparently Cyrus the Great used cellphones as construction material).

These findings remind me of North Korea's nanotech toothbrushes, nano-lubrication and nano-water improver (scroll down to middle of page). I can't find the report I read a few years ago about how a traditional stone remedy (?) had nanomedical properties.

(Korean News is an unintentionally hilarious yet sinister 'news' source. Consider "Kim Jong Il Sends Books to Grand People's Study House", where the donated books include Microsoft Windows 2000 Security Guide and Potato Food. "Scientific and Technological Presentation Held" proudly reports on research into the immortal flowers "Kimilsungia" and "Kimjongilia". "U.S. Move Seeking Preemptive Attack Strategy Lambasted". And ominously, "measures have been taken not to allow a single AIDS case in North Korea").

It is easy to make fun of this kind of breathless nationalist promotion. If nanotechnology is important, then we must have had it for ages since we are such a great nation! Part of it is of course the bad translations, but it is also the earnestness that makes us cynical westerners smile. That humorless yet passionate approach does not get past our memetic antibodies.

It is also curious to see how nationalism makes a hash of ideological consistency. This nano-news and the recent Iranian criticism of 300 (and the Alexander movie) suggest that Iran thinks its pre-Islamic past is so great that it must be defended from criticism (or merely dumb movies), while pre-Islamic communities are persecuted. And apparently North Korea, despite being world leaders in everything, needs more researchers.

So what's next? Hugo Chavez revealing ancient Venezuelan micromachines?

April 21, 2007

Outsmarting the Great Engineer

Nick Bostrom and I have written a book chapter, The Wisdom of Nature: An Evolutionary Heuristic for Human Enhancement, on the application of evolutionary biology to human enhancement.

The basic argument is simple: when you propose an enhancement, someone is bound to ask "if that's such a good thing, why hasn't nature already given us the trait?" And it is a relevant question: evolution is a great engineer in the sense that it produces organisms that function well (never mind the piles of bones on the workshop floor). It might be hard to improve on quasi-perfection.

Our paper looks at ways in which evolution might not give us what we want. It could be that evolution designed us for an environment we no longer live in: keeping brain energy requirements down made sense when food was scarce, but today we should instead tweak our metabolic setpoints down to avoid obesity, even though that, like larger brains, would have been a disadvantage in the Pleistocene.

Another reason why evolution might not have done what we want is simply that evolution doesn't optimize for human values: contraception is a great thing for people, but from an evolutionary fitness perspective it is not anything evolution would have aimed for.

Finally, there are a large number of things that evolution cannot do. Some things are impossible with our kind of biology, or are surrounded by vast deserts in the fitness landscape: diamond skeletons and radios are hard to evolve. Sometimes we get trapped in local optima, such as the appendix (evolving a smaller one increases the risk of appendicitis, even though having an appendix at all appears to be a slight fitness disadvantage). Evolution may also have a hard time keeping up with changing conditions.
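The local-optimum trap is easy to see in a toy model. The sketch below treats evolution as a greedy hill climber on a hypothetical one-dimensional fitness landscape (the `fitness` and `hill_climb` functions and the peak positions are illustrative assumptions) with a low peak and a higher one separated by a valley. It ignores populations, recombination and drift, so it illustrates the trap rather than modelling real evolution.

```python
import math

def fitness(genotype):
    """Hypothetical two-peaked fitness landscape over genotypes 0..100:
    a low peak near genotype 20 and a higher one near genotype 80,
    separated by a low-fitness valley."""
    x = genotype / 10
    return math.exp(-(x - 2) ** 2) + 2 * math.exp(-((x - 8) ** 2) / 4)

def hill_climb(start, lo=0, hi=100):
    """Greedy local search: try one-step mutations in either direction and
    keep the fitter one, stopping when no single step improves fitness."""
    current = start
    while True:
        neighbours = [g for g in (current - 1, current + 1) if lo <= g <= hi]
        best = max(neighbours, key=fitness)
        if fitness(best) <= fitness(current):
            return current  # local optimum: every one-step change is worse
        current = best

for start in (10, 70):
    peak = hill_climb(start)
    print(f"start {start}: stuck at genotype {peak}, fitness {fitness(peak):.2f}")
```

Starting at genotype 10 the climber stops on the lower peak at 20, while a start at 70 reaches the higher peak at 80: which optimum you end up on depends on where you start, not on which one is best.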

All in all, if a proposed enhancement fits one of these possibilities, we have good reason to think that we know why nature did not give it to us. If we can't find any such explanation, or if we get contradictory results, that is a signal for caution. For example, life extension gets a green light (evolution does not select strongly for post-reproductive life, antagonistic pleiotropy, differing human values etc.), although this by no means shows that it will be easy to do. Removing sleep, on the other hand, looks like a red light (obvious disadvantage in terms of predation, no function known with certainty, yet strongly conserved across a big chunk of the animal kingdom).

The chapter is still being revised, and Nick and I would like to hear your comments. If you have read it and would like to comment, email anders.sandberg at philosophy.ox.ac.uk.

April 19, 2007

Creative Destruction

This week on CNE I blogged about the evolutionary genetics of cancer. I have always thought there is something tremendously cool about how cancer cells undergo rapid evolution: destructive for the body, but a creative process for the cancer cells.

Speaking of creative destruction, one implication seems to be that if the NIH succeeds in making cancer merely a serious chronic disease by 2015, we will get a lot of chronically ill people. Add to this life extension, better metabolic control (diabetes), and the likelihood that many illnesses will be hard to prevent for purely social reasons. Chronic illness does not necessarily mean a low quality of life or even ill health; it is largely a state of having a condition that needs to be monitored and possibly controlled. But the current health care system is based on the assumption that the sick are a minority who mostly get cured or die, plus a small group of chronic patients. What if the chronically ill (broadly defined, including medicalization of bad memory and addiction risk as well as genetic predispositions for various states) become the majority?

In such a world practically everyone wants and needs healthcare, but it can hardly be supplied by centralized organisations with fixed prices. There are simply too many patients for the number of health care professionals, which is kept artificially low by very rigorous certification. Running it all on tax or insurance money seems unlikely to work, especially since people will likely overconsume if there is a price ceiling. When nearly everybody overconsumes, it does not take much overconsumption per person to bankrupt the system completely.

The solution I believe in is to get what I described in last week's CNE entry, supply side medicine, to work. We need medical entrepreneurs who compete (keeping prices down) and innovate (meeting the new kinds of demand). That of course requires enabling patient choice, for example through voucher systems. I expect that McClinics will grow in number and sophistication; maybe we will also see Oncology King next to them?

April 12, 2007

Supply Side Medicine

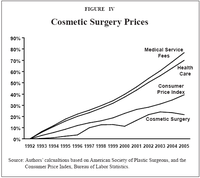

This week on CNE I blog about why medicine is so backwards. John C. Goodman makes the point in his paper that the diffusion of technologies such as telephones, email and electronic records has been extremely slow in medicine compared to other professions. The explanation he gives is the lack of incentives to innovate, and he points to the far more dynamic situation in cosmetic surgery and LASIK.

His graph of the cost of cosmetic surgery is interesting, because it suggests that thanks to competition and price transparency it has become cheaper over time. This is cheering not just from an aesthetic perspective, but also from a human enhancement equality perspective. One of my main worries has been that the prices of service-based enhancements will be high and remain high (unlike gadgets and drugs, which become cheaper over time), possibly causing social friction. But this shows that prices do not have to remain high if there is enough competition. Given that enhancements are somewhat likely to fall outside the traditional healthcare system anyway, closer to elective surgery, this might be good social news.

April 11, 2007

Critical Life Path

Media A (via Visual Complexity) has a fascinating diagram of events in the life of a fictional designer living from 1990-2090. It mixes personal development ("Learn early networking skills at kindergarten", "Go back to school and study nanotechnology") and trends ("Child plays with HAM II: mother-in-law objects", "24-hour business and recreation hubs", "Prada fashion and Monsanto agribusiness join forces for hostile takeover of big corporation"), showing how they affect each other.

It is a mixture of plausible possibilities and bizarre wildcards, which makes it ring very true. Airport hub universities and pausing groups already sound like good ideas. Memory telescopes, Greenpeace reincorporating as Greenpeace Innovation Design Ltd, business models for design in multiple universes: why not? Weirder things have happened. Most future speculation tries to be grounded in known facts and trends, which makes it miss the totally new trends. This design-biased play seems to come up with several good ideas.

Maybe this kind of inventive life map is something we all ought to do? Based on what we think is likely or possible, we should draw possible future lives. Maybe What If could be another inspiration. Another interesting approach is Dandelife, where people can enter their life stories. That is intended for Web 2.0 autobiography, but it could easily be extended for making potential or proactive autobiographies.

As for myself, I have known since I was about 5 that I'm going to end up as a planetary information network.

April 09, 2007

Reinventing RPGs

Supergänget by Tobias Radesäter is a nice example of form and function fitting perfectly. It is a little Swedish superhero RPG written in cartoon form: a short (28 pages), simple game intended and suited for short sessions.

What really delighted me was the self-referential style. The cartoon describes how the players at an RPG session sneak away to act as costumed crimefighters (which is also how game sessions are intended to start in-game). The GM walks away to his secret identity as the game designer, explaining the game while the plot goes on. It all reminds me of Scott McCloud's relaxed walks through his comics.

Roleplaying games have never become stuck in any fixed format, despite the popularity of various rules-heavy systems. There is an obvious freedom to select new settings and ways of roleplaying them, and the rule systems are explicitly visible to players and GMs alike. This invites tinkering and experimentation far more than other kinds of games, where consistency of rules is paramount. Moreover, as multilayered fiction, roleplaying games by their nature tend to develop self-referential and self-modifying loops. Be it the designer considerations stated openly in Skymningshem: Andra Imperiet, the deliberately self-referential metaplot of Over the Edge, the subtle parody of HackMaster or the self-critical rants of Violence, roleplaying games are not afraid of tearing down the fourth wall.

Cubic Terms Make Smart People Bankrupt

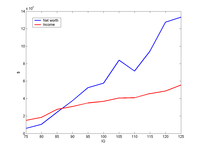

I recently came across the paper Do you have to be smart to be rich? The impact of IQ on wealth, income and financial distress by J L Zagorsky (Intelligence, in press). The subject is interesting: does higher intelligence increase income and saved wealth, and does it protect from making bad financial decisions? Zagorsky reaches the counterintuitive conclusion that it does not improve overall wealth despite increasing income (the income part was known before), and that there is a nonlinear relationship between IQ and being in financial distress: being smarter does not reliably make you economically wiser.

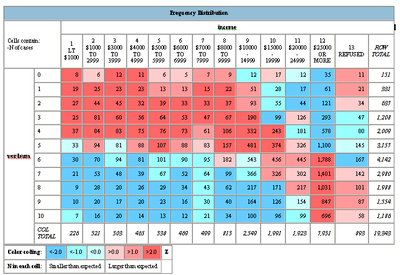

Data from table 2, showing median income and net worth. The medians are at least increasing!

These conclusions would have been good if they had not been marred by "machine thinking". There were frequent references to which piece of software was being used, as if this were relevant once the statistical methods had been stated. A number of regressions were done using multiple methods, for the stated purpose of dealing with the skewness of financial data. But if financial data are too skewed, wouldn't log-transformed data work better? I got the impression that the author simply used the entire toolbox because it was there. Running several different kinds of regression gives little that can be meaningfully compared, and it increases the chances of spurious significance. A better approach might be something along the lines of Sala-i-Martin's "millions of regressions" method, where the distribution of the coefficient of a particular variable is analysed as different sets of other variables are included in the regression. This gets around the risk of handpicking explanatory variables.
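A heavily simplified sketch in the spirit of the "millions of regressions" idea: regress an outcome on the variable of interest plus every subset of up to two candidate controls, and look at how the coefficient of interest moves. Sala-i-Martin's own procedure differs in its details; here it is plain least squares on hypothetical synthetic data, and the helper names `ols` and `coefficient_distribution` are illustrative.

```python
import itertools
import random
import statistics

def ols(X, y):
    """Least-squares coefficients for design matrix X (list of rows),
    via the normal equations solved by Gaussian elimination."""
    k = len(X[0])
    A = [[sum(row[i] * row[j] for row in X) for j in range(k)]
         + [sum(row[i] * yi for row, yi in zip(X, y))] for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(col + 1, k):
            f = A[r][col] / A[col][col]
            for c in range(col, k + 1):
                A[r][c] -= f * A[col][c]
    b = [0.0] * k
    for i in reversed(range(k)):
        b[i] = (A[i][k] - sum(A[i][j] * b[j] for j in range(i + 1, k))) / A[i][i]
    return b

def coefficient_distribution(x, controls, y, max_controls=2):
    """Coefficient on x from regressing y on x plus every subset of up to
    max_controls candidate controls, keyed by the subset of names used."""
    coefs = {}
    names = sorted(controls)
    for m in range(max_controls + 1):
        for subset in itertools.combinations(names, m):
            X = [[1.0, x[i]] + [controls[name][i] for name in subset]
                 for i in range(len(y))]
            coefs[subset] = ols(X, y)[1]  # index 1 = coefficient on x
    return coefs

# Hypothetical data: true coefficient on x is 2.0; z1 affects y and is
# correlated with x; z3 affects y but is independent of x; z2 is irrelevant.
rng = random.Random(1)
n = 500
x = [rng.gauss(0, 1) for _ in range(n)]
z1 = [xi + rng.gauss(0, 1) for xi in x]
z2 = [rng.gauss(0, 1) for _ in range(n)]
z3 = [rng.gauss(0, 1) for _ in range(n)]
y = [2.0 * xi + 1.5 * a + 0.5 * c + rng.gauss(0, 1)
     for xi, a, c in zip(x, z1, z3)]

coefs = coefficient_distribution(x, {"z1": z1, "z2": z2, "z3": z3}, y)
for subset, b in sorted(coefs.items(), key=lambda kv: kv[1]):
    print(subset, round(b, 2))
values = list(coefs.values())
print("mean", round(statistics.mean(values), 2),
      "sd", round(statistics.stdev(values), 2))
```

Regressions that include z1 land near the true 2.0, while those that omit it drift towards 3.5 (omitted-variable bias); the spread across specifications, rather than any single handpicked regression, is what carries the information.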

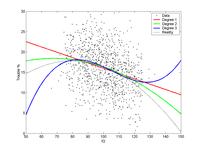

The most irritating case was the financial distress analysis where a third degree polynomial was fitted because: "First, by using Excel's chart trend-line function, a polynomial of order 3 visually best fits the data series shown in Table 5." Here is the data, I leave it to the viewer to determine whether this really looks like a third degree curve:

"Second, a common way of ranking competing logistic models is comparing their Akaike's information criteria (AIC) (Akaike, 1974). The model with the smallest AIC fits the data best. Starting from a linear model the AIC falls until IQ cubed is added, but then it rises sharply once IQ raised to the fourth power is included." The author does not explain how AIC was applied. Given that the skewness of the financial data does not appear to be controlled for, one cannot assume normal errors, and if the software assumes normality the AIC comparison may quite possibly be skewed too.
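For reference, AIC is computed from the maximized likelihood of a model with k estimated parameters, so its value depends on which likelihood the software assumes. With normal errors and least squares (maximum-likelihood variance RSS/n) it reduces to a function of the residual sum of squares; a logistic model instead uses the Bernoulli likelihood of the fitted probabilities:

```latex
\mathrm{AIC} = 2k - 2\ln\hat{L}

\text{Normal errors: } \mathrm{AIC} = n\ln\!\left(\frac{\mathrm{RSS}}{n}\right) + 2k + n\,(1 + \ln 2\pi)

\text{Logistic model: } \ln\hat{L} = \sum_{i=1}^{n}\left[\,y_i\ln\hat{p}_i + (1 - y_i)\ln(1 - \hat{p}_i)\,\right]
```

Only differences in AIC between models fitted to the same data matter, so the constant term can be dropped when comparing polynomial degrees.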

The resulting conclusion that high IQ people may be more likely to suffer financial trouble seems to be due to the cubic term. Zagorsky tries a number of explanations:

"One explanation is that higher IQ score individuals might be busier and less focused on routines like paying bills. Another explanation is those with a higher IQ score might lead a life-style that is closer to the financial precipice because they feel they are smart enough to calculate and understand all relevant factors. A third explanation is that smarter people have a better memory and are more likely to remember mistakes."

There is probably some truth to this. But a far simpler explanation is that it is a case of overfitting.

A fitted high degree polynomial will rush towards (positive or negative) infinity outside the interval of points it has been fitted to. The financial data seems to be mostly in the interval 75-125 (as would be expected from a normal population) but is extrapolated up to 140 in table 7. No wonder the risks start increasing!
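A back-of-envelope calculation shows how quickly higher-order terms take over. Assuming for illustration that the polynomial is centred at IQ 100, write u = IQ - 100; the data end around u = 25, while IQ 140 corresponds to u = 40:

```latex
\left(\frac{40}{25}\right)^{2} = 2.56, \qquad \left(\frac{40}{25}\right)^{3} = 4.096
```

So relative to its size at the edge of the data, a quadratic term grows about 2.6-fold by IQ 140 while a cubic term grows about 4.1-fold, and any estimation error in the cubic coefficient is amplified by the same factor.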

Here is a toy example, where I simulated 1000 individuals with IQs 75-125, with a trouble probability that was a simple parabolic curve plus noise. I fitted a linear, quadratic and cubic curve to them.

The blue cubic curve suffers from clear overfitting. Zagorsky's regression is admittedly different, but the evidence that a third degree polynomial is needed does not look that strong.
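The toy experiment can be reconstructed roughly as follows. This is a sketch under stated assumptions, not the original code: IQs are drawn uniformly from 75-125, and the parabola (0.10 + 0.05 z², minimum at IQ 100) and the noise level (0.05) are hypothetical choices. It fits degrees 1-3 by plain least squares (not the logistic model Zagorsky used), reports a Gaussian AIC and the prediction at IQ 140, then repeats the simulation 100 times to measure how much the IQ-140 prediction varies for the quadratic and cubic fits.

```python
import math
import random
import statistics

def solve(A):
    """Solve an augmented k x (k+1) system by Gaussian elimination with pivoting."""
    k = len(A)
    A = [row[:] for row in A]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(col + 1, k):
            f = A[r][col] / A[col][col]
            for c in range(col, k + 1):
                A[r][c] -= f * A[col][c]
    sol = [0.0] * k
    for i in reversed(range(k)):
        sol[i] = (A[i][k] - sum(A[i][j] * sol[j] for j in range(i + 1, k))) / A[i][i]
    return sol

def fit_poly(xs, ys, degree):
    """Least-squares polynomial fit via the normal equations; returns c0..c_degree."""
    k = degree + 1
    S = [sum(x ** m for x in xs) for m in range(2 * k - 1)]      # power sums of x
    T = [sum(y * x ** m for x, y in zip(xs, ys)) for m in range(k)]
    return solve([[S[i + j] for j in range(k)] + [T[i]] for i in range(k)])

def poly_eval(c, x):
    return sum(ci * x ** i for i, ci in enumerate(c))

def to_z(iq):
    # Centre and scale IQ so that the data range 75-125 maps to [-1, 1].
    return (iq - 100) / 25

def simulate(n, rng):
    """Hypothetical toy data: uniform IQs, parabolic 'trouble' plus noise."""
    zs = [to_z(rng.uniform(75, 125)) for _ in range(n)]
    ys = [0.10 + 0.05 * z ** 2 + rng.gauss(0, 0.05) for z in zs]
    return zs, ys

def gaussian_aic(zs, ys, c):
    """AIC assuming normal errors, constant dropped; k = coefficients + variance."""
    n = len(ys)
    rss = sum((y - poly_eval(c, z)) ** 2 for z, y in zip(zs, ys))
    return n * math.log(rss / n) + 2 * (len(c) + 1)

rng = random.Random(0)
zs, ys = simulate(1000, rng)
z140 = to_z(140)
fits = {d: fit_poly(zs, ys, d) for d in (1, 2, 3)}
for d, c in fits.items():
    print(f"degree {d}: AIC {gaussian_aic(zs, ys, c):.1f}, "
          f"prediction at IQ 140 {poly_eval(c, z140):.3f}")
print(f"true value at IQ 140: {0.10 + 0.05 * z140 ** 2:.3f}")

# Refit on fresh datasets to see how much the extrapolation wobbles.
preds = {2: [], 3: []}
for _ in range(100):
    zs_r, ys_r = simulate(1000, rng)
    for d in preds:
        preds[d].append(poly_eval(fit_poly(zs_r, ys_r, d), z140))
spread = {d: statistics.stdev(p) for d, p in preds.items()}
print("spread of IQ-140 predictions:", spread)
```

Within 75-125 the quadratic and cubic fits are almost indistinguishable, but with these settings the cubic's IQ-140 predictions should scatter noticeably more from dataset to dataset: the extra term is fitted mostly to noise, and extrapolation amplifies it.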

In the end, this paper is a good example of why we should not let our software do our scientific thinking. Research is not just about getting a dataset, plugging it into a statistical model and coming up with an explanation of why the result is plausible.

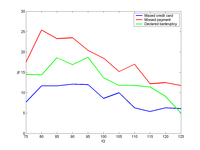

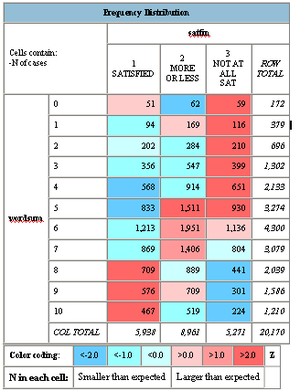

Still, what is not enough for a scientific paper may be enough for a blog. I took the WORDSUM vocabulary score from the General Social Survey (GSS) as a proxy for intelligence, and plotted the frequency distribution of INCOME for each WORDSUM score.

There seems to be a pretty solid link between having more than 4-5 points of WORDSUM and getting a better income. Unfortunately I did not find any variables useful for estimating savings, so we cannot check the most interesting issue in Zagorsky's paper. Plotting whether people feel they are satisfied with their financial situation showed a clear trend:

Being above average seems to make you more satisfied. Maybe this is just because of the higher income, but I suspect savings play a role too.

There are also variables representing economic trouble; summing them and plotting the sum against WORDSUM gives this:

Not much evidence for more trouble at the high end, but there seems to be somewhat more financial distress in the lower middle part of the intelligence Gaussian. This is where there are enough people to get some data, they have reasonably large incomes, and they might be stupid from time to time. There is also a fairly strong anticorrelation (-0.38) between WORDSUM and believing that there is no point in planning, that one has little control over bad events and that good events are mostly luck (I summed BADBRKS, LITCNTRL, MOSTLUCK and NOPLAN). That kind of fatalism and external locus of control seems likely to predispose people to financial trouble and low saving.
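A minimal sketch of the summed-index correlation, on a handful of hypothetical toy respondents rather than real GSS data. It assumes each of the four items has already been recoded 1-4 so that higher means more fatalistic; the direction of coding determines the sign of the correlation, so check it against the codebook before summing. The `pearson` helper is written out by hand for portability.

```python
import math
import statistics

# Hypothetical toy respondents, NOT real GSS data:
# (WORDSUM score, (BADBRKS, LITCNTRL, MOSTLUCK, NOPLAN)), each item assumed
# recoded 1-4 so that higher = more fatalistic.
respondents = [
    (2, (4, 4, 4, 3)),
    (4, (4, 3, 3, 3)),
    (5, (3, 3, 3, 3)),
    (6, (4, 3, 3, 2)),
    (7, (3, 3, 2, 2)),
    (9, (3, 2, 2, 2)),
    (10, (2, 2, 2, 1)),
]

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    cov = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    sx = math.sqrt(sum((a - mx) ** 2 for a in xs))
    sy = math.sqrt(sum((b - my) ** 2 for b in ys))
    return cov / (sx * sy)

wordsum = [w for w, _ in respondents]
fatalism = [sum(items) for _, items in respondents]  # summed fatalism index
r = pearson(wordsum, fatalism)
print(f"r = {r:.2f}")
```

The toy rows are deliberately clean, so the correlation comes out far stronger than the -0.38 seen in the real data; the point is only the mechanics of building the index and correlating it with WORDSUM.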

April 04, 2007

Medical Phones

This week I blogged on CNE about using cellphones for medical records.

The work done at the Quinn Lab is pretty fun: they turn cellphones into visualisation devices. While the medical applications are useful and impressive (and no doubt bring in grant money), my bet is that interfacing with GIS might be even more of a killer app.

As soon as there is enough processing power in the phones, the real bottleneck becomes the network and information access. A patient record system has to handle authentication, privacy monitoring and quality of service. None of this is insurmountable, but making the basic system flexible enough to be accessed from mobile devices might be tricky from both an architectural and a legal standpoint. I'm worried that electronic health records may become so regulated that they can never be more than software pieces of paper.

Of course, as demonstrated by mp3 players and the iPod, sometimes alternative institutions develop outside regulation and eventually force a change.

Denying Denial

A little essay I wrote on why it is a bad idea to ban holocaust denial was posted on the Eudoxa site.

The basic argument is traditional J.S. Mill (and David Brin; his The Transparent Society is still the most accessible and clear explanation I have seen of just why we ought to strive for an open society). Banning certain propositions damages the epistemic underpinnings of society, and given our inability to distinguish hurtful speech acts from hurtful truths, we had better allow both.

I think most genocide denial bans are simply laziness - a way to avoid debate. Instead of trying to show how wrong and misguided the deniers are and point out real evidence (takes effort) they just silence debate. But silencing public debates does not prevent views from spreading, and in our underdog-cheering culture it might actually help these "banned" views.

As neuroimaging develops it will become increasingly possible to check whether people actually hold certain views. If some views are declared beyond the pale even in democratic societies, what does that mean for freedom of thought?