September 09, 2009

Join the Cloud

I participated in the Cloud Intelligence Symposium at the "Human Nature" Ars Electronica Festival 2009. It was a very fun event that linked rather theoretical considerations by me, Stephen Downes, and Ethan Zuckerman with very real-world applications and activism by Isaac Mao, Hamid Tehrani, Xiao Qiang, Evgeny Morozov, Kristen Taylor, Teddy Ruge, Pablo Flores, Andrés Monroy-Hernández, and Juliana Rotich.

Audio and video of our talks can be downloaded here. Presentations are appearing on Slideshare. There is a web page for cloud intelligence. During the talks we, the audience, and the net twittered continuously (tag #arscloud). Yes, now I am part of the twittering masses too.

Cloud Superintelligence

My slides can be found here. My talk was about why groups of people can be so smart (consider ARG-solving teams or a market), yet can obviously also be far less intelligent than they could be (consider national foreign policy, market bubbles, etc.). Part of the answer is how the group solves its internal communication problems: better organisation produces greater benefits than just making individuals better, and allows bigger groups. This can be done formally, as with a company, or informally, through self-organisation or simply through the shaping effect of the right medium.

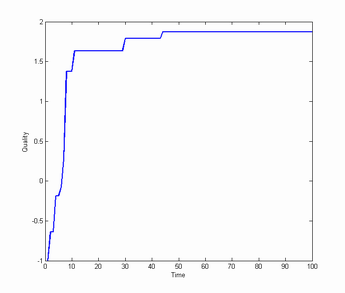

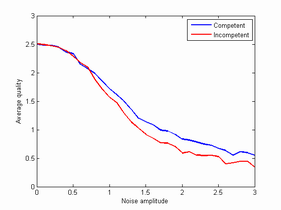

A simple example is how Wikipedia page quality may develop. Imagine that people of random competence (normally distributed) visit a page and, if they find the page to be worse than their competence, rewrite it to their level. The effect is a gradual improvement (growing roughly as sqrt(log(n)), where n is the number of visitors).
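To see where the sqrt(log(n)) growth comes from, here is a rough sketch under assumptions that are mine rather than from the slides: competences $c_1, \dots, c_n$ are independent standard normal draws, self-assessment is noise-free, and the page starts no better than its first visitor. Then the page quality $Q_n$ after $n$ visitors is simply the best competence seen so far, and the standard leading-order result for the maximum of normal samples applies:

$$
Q_n = \max(c_1, \dots, c_n), \qquad \mathbb{E}[Q_n] \sim \sqrt{2 \ln n} \quad (n \to \infty).
$$

This is only the asymptotic leading term and it overshoots for moderate n, but it shows why the first few visitors matter most and later ones add progressively less.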

This works even if people have a noisy estimate of their own competence (or of the page's quality): even if the noise is two standard deviations, there will be almost one standard deviation of improvement in quality after 100 visitors. Adding incompetent agents who don't recognize that their basic skill is below average still doesn't change things much! The system is pretty robustly self-correcting. You don't need many agents to get a big improvement at the start, but you want the other agents to maintain the quality.
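The page-quality model above can be sketched in a few lines of Python. This is a minimal reconstruction, not the code behind the slides: the function name `simulate_page_quality`, the starting quality of 0 (average competence), and the choice to put the noise on the visitor's self-assessment are all assumptions.

```python
import random


def simulate_page_quality(n_visitors, noise_sd=0.0, start_quality=0.0, seed=None):
    """Return the page quality after each visitor.

    Assumptions (not from the original talk): competence is drawn from a
    standard normal distribution, the page starts at `start_quality`, and
    noise affects only the visitor's estimate of their own competence.
    """
    rng = random.Random(seed)
    quality = start_quality
    history = []
    for _ in range(n_visitors):
        competence = rng.gauss(0.0, 1.0)
        # The visitor's (possibly wrong) belief about their own competence.
        perceived = competence + rng.gauss(0.0, noise_sd)
        if perceived > quality:
            # They rewrite the page, which then reflects their true competence.
            quality = competence
        history.append(quality)
    return history


if __name__ == "__main__":
    runs = 1000
    for noise in (0.0, 2.0):
        finals = [simulate_page_quality(100, noise_sd=noise, seed=s)[-1]
                  for s in range(runs)]
        print(f"noise_sd={noise}: mean quality after 100 visitors = "
              f"{sum(finals) / runs:.2f}")
```

With `noise_sd=0.0` the quality can only go up, since a rewrite happens only when the visitor really is better than the page. With noise, rewrites sometimes make the page worse, but overconfident visitors are themselves soon overwritten, which is the self-correction described above.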

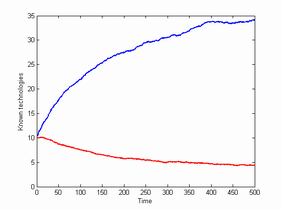

Another reason why big groups are good is that they can maintain more knowledge and competence than small groups. Consider the model where each agent in a group knows three technologies, and agents randomly die and are replaced by new agents who learn new technologies from random elders. If inventions occur at a slow rate, a small population will lose its technologies. Larger populations not only maintain them, they build up a bigger repertoire over time.
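A rough Python sketch of this model follows. The details are my guesses at a reasonable setup rather than the exact model from the talk: everyone starts with the same three technologies, a newcomer fills each of its three slots either by inventing something new (with probability `invention_rate`) or by copying one technology from a randomly chosen elder, and copying the same thing twice just leaves the newcomer knowing fewer technologies.

```python
import random


def simulate_technologies(pop_size, steps, invention_rate=0.01, slots=3, seed=None):
    """Return the number of distinct technologies known after each step.

    Assumptions (hypothetical): one random agent dies per step, and all
    agents start out knowing the same `slots` technologies.
    """
    if pop_size < 2:
        raise ValueError("need at least two agents so newcomers have elders")
    rng = random.Random(seed)
    population = [set(range(slots)) for _ in range(pop_size)]
    next_id = slots  # identifier for the next invented technology
    history = []
    for _ in range(steps):
        dead = rng.randrange(pop_size)
        elders = [known for i, known in enumerate(population) if i != dead]
        newcomer = set()
        for _slot in range(slots):
            if rng.random() < invention_rate:
                newcomer.add(next_id)  # a brand-new invention
                next_id += 1
            else:
                teacher = rng.choice(elders)
                # sorted() turns the set into a list so choice() can index it.
                newcomer.add(rng.choice(sorted(teacher)))
        population[dead] = newcomer
        history.append(len(set().union(*population)))
    return history


if __name__ == "__main__":
    for size in (5, 50, 500):
        final = simulate_technologies(size, steps=20000, seed=1)[-1]
        print(f"population {size}: {final} distinct technologies at the end")
```

In this sketch a technology disappears when its last carrier dies before anyone copies it. That happens easily in a small group and rarely in a large one, so only the large populations get to accumulate inventions.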

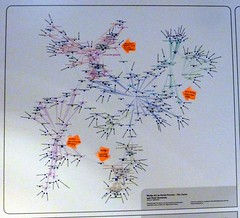

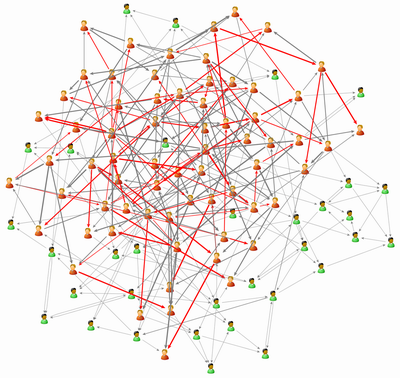

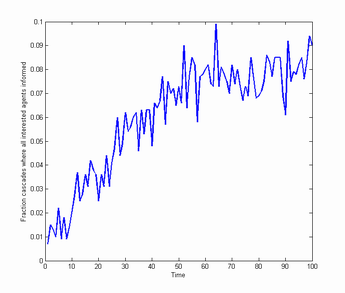

My third model is a model of blogs. Agents read a limited number of information sources and have certain interests. Random agents post stories based on their interests. If a source an agent reads carries a piece of interesting news, the agent regards that source as more useful. Agents write about the interesting news with a certain probability, creating information cascades. Over time agents randomly shift to other sources if one proves useless (e.g. no interesting news from that agent in 1000 iterations).

It turns out that the agents become better and better at distributing news. At first most agents will be isolated from agents sharing their interests, but over time communities develop. These in turn act as "antennas" helping agents get news even though they have a limited number of inputs - the blogosphere helps overcome attention problems.
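The blog model can be sketched roughly as below. Many details here are simplifications I have assumed for illustration: each agent has a single interest (a topic number), "usefulness" is tracked only as the time a source last delivered interesting news, and an agent drops a source after `patience` steps without any. The function `simulate_blogs` and all its parameters are hypothetical names.

```python
import random


def simulate_blogs(n_agents=60, n_topics=4, n_sources=3, steps=3000,
                   repost_prob=0.5, patience=200, seed=None):
    """Return (reach, sources): per-story fraction of interested agents who
    received the story, and the final reading lists."""
    rng = random.Random(seed)
    interests = [rng.randrange(n_topics) for _ in range(n_agents)]
    # sources[a] lists the agents that agent a reads (never a itself).
    sources = [rng.sample([b for b in range(n_agents) if b != a], n_sources)
               for a in range(n_agents)]
    last_useful = [[0] * n_sources for _ in range(n_agents)]
    reach = []

    for t in range(1, steps + 1):
        author = rng.randrange(n_agents)
        topic = interests[author]
        fans = [a for a in range(n_agents)
                if a != author and interests[a] == topic]

        # Information cascade: interested readers may repost the story.
        posters = {author}
        frontier = [author]
        informed = set()
        while frontier:
            poster = frontier.pop()
            for reader in fans:
                if poster not in sources[reader]:
                    continue
                last_useful[reader][sources[reader].index(poster)] = t
                informed.add(reader)
                if reader not in posters and rng.random() < repost_prob:
                    posters.add(reader)
                    frontier.append(reader)
        reach.append(len(informed) / len(fans) if fans else 0.0)

        # Agents replace sources that have been useless for too long.
        for a in range(n_agents):
            for slot in range(n_sources):
                if t - last_useful[a][slot] > patience:
                    candidates = [b for b in range(n_agents)
                                  if b != a and b not in sources[a]]
                    sources[a][slot] = rng.choice(candidates)
                    last_useful[a][slot] = t
    return reach, sources


if __name__ == "__main__":
    reach, _ = simulate_blogs(seed=1)
    early = sum(reach[:500]) / 500
    late = sum(reach[-500:]) / 500
    print(f"mean reach, first 500 stories: {early:.2f}")
    print(f"mean reach, last 500 stories:  {late:.2f}")
```

Comparing the mean reach early and late in a run is one way to check whether communities of shared interest are forming. The outcome depends on the parameters, so treat this as a toy to experiment with rather than a reproduction of the results above.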

Overall, the right kind of organisation can make agents much smarter. It is also possible to augment it with "superknowledge", using big databases like Google or (my favourite) 80 million tiny images. Enough data or knowledge can itself substitute for intelligence in certain ways.

My conclusion was that we are already in an era where posthuman superorganisms are running things - we just know them as markets, nations, companies or blogging cliques. However, so far these superorganisms have evolved by trial and error, and often not in the direction of greater intelligence (just consider how cognitive biases are often built into or promoted in institutions for reasons that make sense on the individual human level but make the institution pretty incompetent). With new media and new awareness of distributed cognition we might actually start seeing much smarter cloud intelligences. And we will be in them.

Posted by Anders3 at September 9, 2009 04:18 PM