October 07, 2006

Warning Signs for Tomorrow

The Book of Ratings rates danger symbols (via Ian Albert). Very enjoyable, and brings up the question of how to mark new threats. All the truly cool transhuman technologies are going to require warning signs.

I have added larger versions of these signs to my Flickr photostream.

Maybe we should define an RFID standard for these kinds of hazard warnings, if one doesn't already exist? Every container or dangerous object would carry an RFID tag that enables a "danger detector" to tell that there is a particular problem nearby. It sounds like a reasonable extension of PML. Of course, a warning for "RFID reception impaired" might be in trouble. However, there is still a need for visible signs to tell meddling monkeys to keep away. There are a few obvious concerns: the signs should be clear, easily recognizable, not reliant on color vision and so on. But as the ratings show, the symbols are rather arbitrary.
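A minimal sketch of what such a tag payload and detector might look like. Everything here is hypothetical: `HazardTag`, its fields and `danger_report` are illustrative names, not part of PML or any existing RFID standard, and the numeric severity (0 = worst) is an assumed convention.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HazardTag:
    """Hypothetical payload for a hazard-warning RFID tag (not an existing standard)."""
    tag_id: str
    hazard: str    # human-readable hazard type, e.g. "antimatter"
    severity: int  # assumed convention: 0 is the most severe


def danger_report(tags_in_range: list[HazardTag]) -> list[str]:
    """Turn the tags a detector currently hears into warnings, most severe first."""
    ordered = sorted(tags_in_range, key=lambda tag: tag.severity)
    return [f"WARNING: {tag.hazard} nearby (severity {tag.severity}, tag {tag.tag_id})"
            for tag in ordered]


if __name__ == "__main__":
    heard = [HazardTag("A17", "antimatter", 2),
             HazardTag("B03", "self-replicating device", 0)]
    for line in danger_report(heard):
        print(line)
```

Note that a detector hearing nothing returns an empty list, which is exactly the "RFID reception impaired" problem: silence cannot be distinguished from safety, which is one more reason to keep the visible signs.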

Note the wall in the second frame for some examples. The second sign from the left is a warning against naked singularities (better depicted in a later strip). The ghost warning is obvious (but a bit strange given Kevyn's rationalism during the haunted starship storyline). The DNA helix suggests either an alternative to the (IMHO great) biohazard symbol or that the biohazard symbol was too hard to draw. Maybe it is about threats to the genome.

The singularity tornado sign is a good one. It can be used to mark any object with dangerous spacetime metric properties, be it tidal forces, event horizons or bad topology. However, the tornado suggests the erroneous embedding view of spacetime, so a better version might just show a spiralling shape. Of course, purists will point out that orbits around black holes are, outside the innermost stable orbit, just as stable as orbits around any other mass (black holes don't suck things in without some friction mechanism), and that many other nasty metrics are not even symmetric. But some physical realism ought to be sacrificed for visual saliency. A black spiral signals an obvious, dynamic danger.

Antimatter is another obvious danger. My symbol is intended to evoke both a Penning trap (for early applications where we only have a few antiprotons) and a starlike explosion (for larger amounts). It is also drawn in reverse, to hint at the anti-aspect of antimatter.

Chaos control is likely to be very useful in many future applications. But chaotic systems are extremely sensitive to small perturbations, so interfering with such a system might be inadvisable.
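To illustrate how touchy chaotic systems are, here is a sketch using the logistic map at r = 4, a standard textbook chaotic system chosen purely as a stand-in (no particular system is implied above). Two starting points differing by 10^-10 are iterated until their trajectories differ by more than 0.1; the function names and thresholds are illustrative assumptions.

```python
from typing import Optional


def logistic_trajectory(x0: float, r: float = 4.0, steps: int = 100) -> list[float]:
    """Iterate the logistic map x -> r * x * (1 - x); returns steps + 1 values."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def steps_until_divergence(x0: float, eps: float = 1e-10, r: float = 4.0,
                           steps: int = 100, threshold: float = 0.1) -> Optional[int]:
    """First iteration at which two runs started eps apart differ by more than threshold."""
    a = logistic_trajectory(x0, r, steps)
    b = logistic_trajectory(x0 + eps, r, steps)
    for i, (u, v) in enumerate(zip(a, b)):
        if abs(u - v) > threshold:
            return i
    return None  # no divergence within the simulated steps


if __name__ == "__main__":
    print(steps_until_divergence(0.3))
```

At r = 4 small separations roughly double each iteration on average, so a nudge of one part in ten billion typically becomes macroscopic after something on the order of 30 steps. A "meddling monkey" does not need to push hard.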

Macroscale quantum systems are likely not personally dangerous, but interfering with them will of course disrupt whatever they are doing. Of course, just seeing the warning might be enough to decohere them. Some might interpret the psi sign as a warning for psychic powers. Maybe the sign could show an alive/dead cat instead, but it is a bit too much of an in-joke. People should learn what symbol is used for the wave function.

If strangelets are stable at zero pressure and have a negative charge (a positively charged strangelet would be repelled by ordinary nuclei), they could absorb ordinary matter and convert it into more strangelets. A great energy source, but also potentially a planet- or star-eater.

Whether nanoparticles are dangerous likely depends on their size, composition and surface properties, and on what system they get into. But it seems reasonable to have a marker for generic nanoparticle problems.

As nanosystems become more common we might need to make their activity visible. There is nothing as worrying as invisible technology that might or might not be there, acting quietly. Hence active nanodevices ought to be shown, either by a warning sign or through some visible aspect of their activity (color, light, etc.). Doctorow and Stross had a fun idea for medical nanodevices in Jury Service, where they mark the person being rebuilt with small biohazard signs.

Diamondoids are likely to be very useful, but can be pretty risky in some forms. Bulk diamond shards, for example, are extremely sharp and unlikely to wear down quickly. The hardness and slipperiness of a perfect diamondoid surface might also pose risks.

Self-replicating devices are potentially the most powerful technology of all, since they can increase their reach exponentially in suitable environments. They also raise the risks of arms races (whoever builds and releases one first wins), unwanted replication (the sorcerer's apprentice) and slight misbehaviour of the devices that becomes dangerous as they become numerous.
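A back-of-the-envelope sketch of why exponential replication is so powerful, and why rare faults stop being rare. It assumes a constant doubling time and unlimited resources (real replicators would hit resource limits), and the masses, doubling time and fault rate are made-up illustrative numbers; the function names are hypothetical.

```python
import math


def doublings_needed(start_kg: float, target_kg: float) -> int:
    """Smallest number of doublings d such that start_kg * 2**d >= target_kg."""
    if target_kg <= start_kg:
        return 0
    return math.ceil(math.log2(target_kg / start_kg))


def hours_to_reach(start_kg: float, target_kg: float, doubling_time_h: float) -> float:
    """Time for unchecked replication, assuming a constant doubling time."""
    return doublings_needed(start_kg, target_kg) * doubling_time_h


def expected_faulty(units: float, fault_rate: float) -> float:
    """Expected number of misbehaving units if each misbehaves independently with fault_rate."""
    return units * fault_rate


if __name__ == "__main__":
    # Hypothetical: a 1 kg seed with a one-hour doubling time growing to a million tonnes.
    print(doublings_needed(1.0, 1e9), "doublings,", hours_to_reach(1.0, 1e9, 1.0), "hours")
    # A one-in-a-billion fault rate is negligible for one device but not for a trillion.
    print(expected_faulty(1e12, 1e-9), "faulty units expected")
```

With these assumed numbers the seed needs 30 doublings, i.e. 30 hours, to reach a million tonnes, and a trillion devices with a one-in-a-billion fault rate contain about a thousand misbehaving ones.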

Autonomous devices may start acting at any time because they want to. While they might have safety programming, this cannot be taken for granted. I'm rather happy with the symbol, using the square as an icon for device, the eye as a sign of some form of intelligence or environmental sensitivity, and the arrow for action.

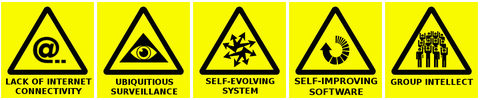

Lack of internet connectivity: today a nuisance, tomorrow a serious problem and possibly even a risk of damage. As our exoselves become more dynamic and more closely linked to our biological minds, a loss of connectivity could cause breakups of our extended minds.

Using microdevices, identity technology and pattern recognition software, it is not inconceivable that future environments will be 100% privacy-free. If this is not the standard situation but only occurs in some places, this sign would mark the surveilled area. In the case of total surveillance the opposite sign, with a crossed-out or blindfolded pyramid, would mark the risky non-observed areas.

A system that evolves freely is potentially very adaptable and creative. It could also become nearly anything, with consequences ranging from the annoying to the disastrous. It is likely that unlimited self-evolution will need to be contained carefully even as we mine it for truly new inventions. The arrows nicely hint at a chaos-star as well as replication.

Systems that might launch a hard takeoff, where they rapidly become smarter and more capable, require care. Even software that doesn't aim at intelligence might still behave unexpectedly, requiring a warning.

Group intellects: don't go too close, or resistance will be futile.

While well-behaved group minds are no doubt selective about who joins (it is, after all, rather intimate) and unlikely to assimilate everybody nearby, there might be applications or situations where mental firewalls are down and brains easily form group intellects. Maybe the people in the icon should all be raising their hands in the same way, but this is the clipart I found.

Memetic hazards: a black lightbulb to represent really bad ideas. Compare with the Science Related Memetic Disorder in A Miracle of Science. Of course, the line between preventing viral bad ideas from spreading and censorship is a fine one.

Motivation hazards: as we learn to affect our brains better, there is an increased risk of addiction, of gaining pleasure from something harmful, or of editing ourselves to like our current state no matter what. The poppy represents such motivation traps.

Exactly what other kinds of hazards could occur with mature cognotechnologies is hard to imagine. The staircase sign represents a general hazard, perhaps the induction of inconsistent beliefs, infinite loops or mistaken perception.

Finally, a catch-all sign for things you really don't want to mess with: existential risks, meaning threats to the future of humanity as a whole.

Size of danger

For a roleplaying game I came up with a system of signs denoting the size of the threat rather than its type. In that setting I assumed that mankind was spread across several solar systems, so a species-level threat needed to affect all the systems (gamma-ray bursts, invasion by enemy aliens). Culture-level threats represent dangers to a single system (self-replicating machines, a local nova). Ecosystem-level threats could wipe out the biosphere and/or technosphere of a planet. Such threats would be species-level threats to us today: large meteors, permanent climate destabilization, ecophagous nanomachines. Nation-level threats risk continent-sized destruction or smaller: nuclear war, biological attacks etc.

Perhaps this ranking can be extended. A natural scale would be the (negative, base-10) logarithm of the fraction of humanity threatened.

- Level 0 threats: all humans threatened. True existential threats.

- Level 1 threats: around 10% of all humans threatened. Continent-sized threats at present.

- Level 2 threats: 1% of all humans threatened.

- Level 3 threats: 0.1% of all humans threatened

- Level 4 threats: 0.01% of all humans threatened

- Level 5 threats: 0.001% of all humans threatened

- Level 6 threats: 0.0001% of all humans threatened

- Level 7 threats: 0.00001% of all humans threatened. At present <1000 people.

- Level 8 threats: 0.000001% of all humans threatened. At present groups <100 people.

- Level 9 threats: one billionth of humanity threatened. Individuals and small groups.

- Level 10 threats: no humans threatened, but other values are (such as an unchanged biosphere, aesthetics or the economy).
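The level can be computed directly as the negative base-10 logarithm of the threatened fraction. A minimal sketch: rounding to the nearest level, capping anything below a billionth at level 9, and the world population of roughly 6.5 billion used in the example are my assumptions, not part of the scheme itself.

```python
import math


def threat_level(threatened: float, population: float) -> int:
    """Size-of-danger level: round(-log10(threatened / population)), 0 = all of humanity."""
    if threatened <= 0:
        return 10  # level 10: no humans threatened, only other values
    fraction = min(threatened / population, 1.0)
    level = round(-math.log10(fraction))
    # Assumption: anything smaller than the level-9 band still counts as level 9,
    # since level 10 is reserved for threats to no humans at all.
    return min(level, 9)


if __name__ == "__main__":
    world = 6.5e9  # assumed present-day population for the example
    for people in (6.5e9, 6.5e8, 650, 65, 1, 0):
        print(f"{people:>15,.0f} people threatened -> level {threat_level(people, world)}")
```

With 6.5 billion people, 650 threatened people gives level 7 and 65 gives level 8, matching the "<1000" and "<100" notes in the list.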

Maybe the scale makes better sense in the other direction, with normal threats at level 1 and more serious threats at higher levels, up to level 9 and 10. But this way it acts as a countdown, making it explicit that the scale measures the threat relative to the total size of humanity.

In my sf setting the signs had color (as well as text) depending on the level of threat. One could perhaps change the warning-yellow background depending on the threat level, but it would likely be bad for recognition. A better way might be to just have a color marker and some text underneath the warning sign. "SELF-REPLICATING DEVICE. LEVEL 0 THREAT: GLOBAL DANGER. DO NOT MESS WITH"

It might also be possible to add a marker or border showing the probability of the threat happening if one disobeys the warning. Again this could be marked using a logarithmic scale:

- Infrared (black): 100% probability

- Red: 10%

- Orange: 1%

- Yellow: 0.1%

- Green: 0.01%

- Turquoise: 0.001%

- Blue: 0.0001%

- Indigo: 0.00001%

- Violet: 0.000001%

However, this might both give people a feeling of licence ("It is just a 1% chance that something will go wrong") and quickly run out of colours.
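The colour border can be assigned the same way, using -log10 of the probability to pick a band. In this sketch a probability is rounded to the nearest band and anything below violet raises an error, which makes the "runs out of colours" problem explicit; the names and the rounding rule are assumptions.

```python
import math

# Bands from the list above, indexed by -log10(probability):
# index 0 = 100% (infrared/black), 1 = 10% (red), ..., 8 = 0.000001% (violet).
BANDS = ["infrared (black)", "red", "orange", "yellow", "green",
         "turquoise", "blue", "indigo", "violet"]


def probability_band(p: float) -> str:
    """Colour band for the probability that the threat happens if the warning is ignored."""
    if not 0.0 < p <= 1.0:
        raise ValueError("probability must be in (0, 1]")
    index = round(-math.log10(p))
    if index >= len(BANDS):
        raise ValueError("probability below the violet band: the scale has run out of colours")
    return BANDS[index]


if __name__ == "__main__":
    for p in (1.0, 0.1, 0.01, 1e-8):
        print(f"{p:g} -> {probability_band(p)}")
```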

Another addition would be an outrage scale à la Peter Sandman, showing just how outraged people will be if something goes wrong. Maybe this could be marked with a series of symbols or icons, denoting for example that if you mess up this particular thing, the damage is going to involve new technology, children, chronic effects and personal responsibility.

Posted by Anders3 at October 7, 2006 02:57 PM